Ship fast, optimize later: top AI engineers don't care about cost — they're prioritizing deployment

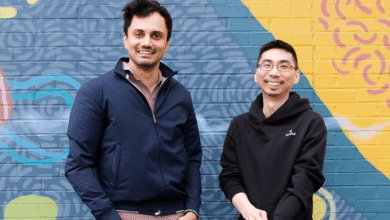

Across industries, rising compute costs are often cited as a barrier to AI adoption, but leading companies are finding that cost is no longer the real constraint. The tougher challenges (and the ones top of mind for many tech leaders)? Latency, flexibility and capacity.

At Wonder, for example, AI adds only a few cents per order; the food delivery and takeout company is far more concerned with cloud capacity as its requirements soar. Recursion, for its part, aims to balance small- and large-scale training and deployment across on-premises clusters and the cloud, which has given the biotech company the flexibility to experiment rapidly.

The companies’ real-world experiences highlight a broader industry trend: for companies running AI at scale, economics are not the deciding factor. The conversation has shifted from how to pay for AI to how quickly it can be deployed and maintained.

AI leaders from the two companies recently spoke with VentureBeat CEO and editor-in-chief Matt Marshall as part of VB’s traveling AI Impact Series. Here’s what they shared.

Wonder: Rethink what you assume about capacity

Wonder uses AI to power everything from recommendations to logistics – but so far, reports CTO James Chen, AI only adds a few cents per order.

Chen explained that the technology component of a meal order costs 14 cents and that AI adds 2 to 3 cents, although that “goes up very quickly” to 5 to 8 cents. Still, that seems almost insignificant next to total operating costs. Instead, the 100% cloud-native AI company’s biggest concern has been capacity to keep up with growing demand.

Wonder was built on “the assumption” (which turned out to be incorrect) that there would be “unlimited capacity,” so the team could move “super fast” without having to worry about managing infrastructure, Chen noted. But the company has grown considerably in recent years, he said. As a result, about six months ago it started getting “little signals from the cloud providers: ‘Hey, maybe you should consider moving to region two,’” because the providers were running out of CPU or data storage capacity in their facilities as demand grew.

It was “very shocking” that Wonder had to move to Plan B sooner than expected. “It’s obviously good practice to have multiple regions, but we were thinking maybe two years down the line,” Chen said.

What is not (yet) economically feasible

Wonder built its own model to maximize conversion rates, Chen said; the aim is to bring new restaurants to the attention of relevant customers as much as possible. These are “isolated scenarios” where models are trained over time to be “very, very efficient and very fast.” For now, Chen said, large models are the best choice for Wonder’s use case. In the long term, though, the company would like to move to small models that are hyper-customized to individuals (via AI agents or concierges) based on their purchase history and even their clickstream. “Having these micro models is definitely the best, but right now the cost is very expensive,” Chen noted. “If you try to make one for every person, it’s not economically feasible.”

Budgeting is an art, not a science

Wonder gives its developers and data scientists as much latitude as possible to experiment, and internal teams review usage costs to make sure no one has turned on a model and built up “massive computing power around a massive account,” Chen said. The company is trying to offload a number of tasks to AI while operating within its margins. “But then it’s very difficult to budget because you have no idea,” he said. Part of the challenge is the pace of development: when a new model comes out, “we can’t just sit there, right? We have to use it.” Budgeting for the unknown economics of a token-based system is “definitely art versus science.”

A critical part of the software development lifecycle is maintaining context when using large native models, he explained. When you find something that works, you can add it to your company’s “context corpus,” which can be sent with each request. That corpus is large, and sending it costs money every time. “More than 50%, up to 80% of your costs are resending the same information to the same engine with every request,” Chen said.

In theory, the more they do, the lower the cost per unit should be. “I know that when a transaction occurs, I pay a tax of X cents on each transaction, but I don’t want to be limited to using the technology for all these other creative ideas,” Chen said.
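To make the 50% to 80% figure concrete, here is a minimal cost model, assuming per-token pricing where the same context corpus is prepended to every request. The token counts and per-million-token prices are hypothetical placeholders, not Wonder’s numbers. The optional `context_discount` parameter is also an assumption: it models a provider that bills repeated prompt prefixes at a reduced rate (often called prompt caching), and whether such a discount exists, and how large it is, varies by provider.

```python
from dataclasses import dataclass


@dataclass
class RequestShape:
    context_tokens: int  # shared "context corpus" resent with every request
    query_tokens: int    # request-specific input tokens
    output_tokens: int   # tokens generated by the model


def request_cost(shape: RequestShape,
                 input_price_per_mtok: float,
                 output_price_per_mtok: float,
                 context_discount: float = 0.0) -> tuple[float, float]:
    """Return (total_cost, context_cost) in dollars for one request.

    context_discount is a hypothetical fraction (0..1) taken off the price of
    the repeated context tokens, e.g. if a provider caches prompt prefixes.
    """
    per_input_token = input_price_per_mtok / 1_000_000
    per_output_token = output_price_per_mtok / 1_000_000
    context_cost = shape.context_tokens * per_input_token * (1 - context_discount)
    query_cost = shape.query_tokens * per_input_token
    output_cost = shape.output_tokens * per_output_token
    return context_cost + query_cost + output_cost, context_cost


def context_share(shape: RequestShape,
                  input_price_per_mtok: float,
                  output_price_per_mtok: float,
                  context_discount: float = 0.0) -> float:
    """Fraction of one request's cost spent on resending the context corpus."""
    total, context = request_cost(shape, input_price_per_mtok,
                                  output_price_per_mtok, context_discount)
    return context / total


if __name__ == "__main__":
    # Hypothetical request: an 8,000-token corpus dwarfs a 500-token query.
    shape = RequestShape(context_tokens=8_000, query_tokens=500, output_tokens=400)
    # Hypothetical prices: $3 per million input tokens, $15 per million output tokens.
    print(f"context share: {context_share(shape, 3.0, 15.0):.0%}")
    print(f"with a 90% discount on repeated context: {context_share(shape, 3.0, 15.0, 0.9):.0%}")
```

With these placeholder numbers the corpus accounts for about 76% of each request’s cost, and the per-query work barely moves that share; only a smaller corpus or cheaper repeated tokens (about 24% under the assumed 90% discount) does.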

The ‘justification moment’ for Recursion

Recursion, meanwhile, has focused on meeting broad computing needs through a hybrid infrastructure of on-premises clusters and cloud inference. When the company initially wanted to build out its AI infrastructure, it had to go with its own setup because “the cloud providers didn’t have many good offerings,” explained CTO Ben Mabey. “The moment of justification was we needed more computing power and we looked at the cloud providers and they said, ‘Maybe in a year or so.’” The company’s first cluster, in 2017, included Nvidia gaming GPUs (1080s, launched in 2016); it has since added Nvidia H100s and A100s and uses a Kubernetes cluster that it runs in the cloud or on-premises. On GPU longevity, Mabey noted: “These gaming GPUs are still in use today, which is insane, right? The myth that a GPU’s lifespan is only three years is absolutely not the case. A100s are still at the top of the list, they are the workhorse of the industry.”

Best use cases on-premises vs. cloud; costs vary

More recently, Mabey’s team trained a foundation model on Recursion’s image repository (which consists of petabytes of data and more than 200 images). This and other large training jobs required a “massive cluster” and fully connected, multi-node setups. “When we need that fully connected network and access to a lot of our data in a highly parallel file system, we go on-prem,” he explained.

Shorter workloads, on the other hand, run in the cloud. There, Recursion’s approach is to use preemptible GPUs and Google tensor processing units (TPUs), meaning running tasks can be interrupted so the hardware can serve higher-priority work. “Because we don’t care about the speed of some of these inference workloads where we’re uploading biological data, whether that’s an image or sequence data, DNA data,” Mabey explained. “We can say, ‘Give us this in an hour,’ and we’re OK with it if it kills the job.”

From a cost perspective, moving large workloads on-premises is “conservatively” ten times cheaper, Mabey noted; over a five-year total cost of ownership (TCO), it is half the cost. For smaller storage needs, however, the cloud can be “quite competitive” on cost.

Ultimately, Mabey urged technology leaders to step back and determine whether they are truly willing to commit to AI; cost-effective solutions typically require buy-in over several years. “From a psychological perspective, I have seen colleagues of ours who do not want to invest in computers, and as a result they always pay on demand,” Mabey said. “Their teams use a lot less computing power because they don’t want to run up the cloud bill. Innovation is really hampered because people don’t want to burn money.”
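The preemption pattern Mabey describes, where batch inference can be killed and rerun later so more urgent work gets the hardware first, can be sketched as a small priority scheduler. This is a hypothetical, in-process illustration (the `PreemptiveScheduler` class and the job names are invented here), not Recursion’s actual system. On a Kubernetes cluster like the one Recursion runs, comparable behavior would more typically come from Kubernetes’ built-in pod priority and preemption (`PriorityClass`) than from custom code.

```python
import heapq
import itertools
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    priority: int             # lower value = more urgent
    preemptible: bool = True  # e.g. batch inference that tolerates being killed


class PreemptiveScheduler:
    """Toy scheduler: a fixed pool of accelerators with priority-based preemption."""

    def __init__(self, num_accelerators: int):
        self.capacity = num_accelerators
        self.running: list[Job] = []
        self.pending: list[tuple[int, int, Job]] = []  # min-heap on (priority, arrival)
        self.preempted: list[str] = []                 # log of killed job names
        self._arrival = itertools.count()

    def _enqueue(self, job: Job) -> None:
        # The arrival counter breaks ties, so Job objects are never compared.
        heapq.heappush(self.pending, (job.priority, next(self._arrival), job))

    def submit(self, job: Job) -> None:
        if len(self.running) < self.capacity:
            self.running.append(job)
            return
        # Only preemptible jobs that are less urgent than the newcomer can be evicted.
        victims = [j for j in self.running if j.preemptible and j.priority > job.priority]
        if not victims:
            self._enqueue(job)
            return
        victim = max(victims, key=lambda j: j.priority)  # evict the least urgent job
        self.running.remove(victim)
        self.preempted.append(victim.name)
        self._enqueue(victim)  # killed work is requeued and restarted from scratch
        self.running.append(job)

    def finish(self, name: str) -> None:
        self.running = [j for j in self.running if j.name != name]
        # Backfill freed accelerators with the most urgent pending jobs.
        while self.pending and len(self.running) < self.capacity:
            _, _, job = heapq.heappop(self.pending)
            self.running.append(job)


if __name__ == "__main__":
    sched = PreemptiveScheduler(num_accelerators=2)
    sched.submit(Job("dna-inference", priority=5))
    sched.submit(Job("image-inference", priority=5))
    sched.submit(Job("training", priority=1, preemptible=False))
    print(sched.preempted)                  # ['dna-inference']
    print([j.name for j in sched.running])  # ['image-inference', 'training']
    sched.finish("image-inference")
    print([j.name for j in sched.running])  # ['training', 'dna-inference']
```

The design choice mirrors the trade-off in the quote: low-priority jobs accept being killed and rerun in exchange for cheap, interruptible capacity, while non-preemptible work is never evicted.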