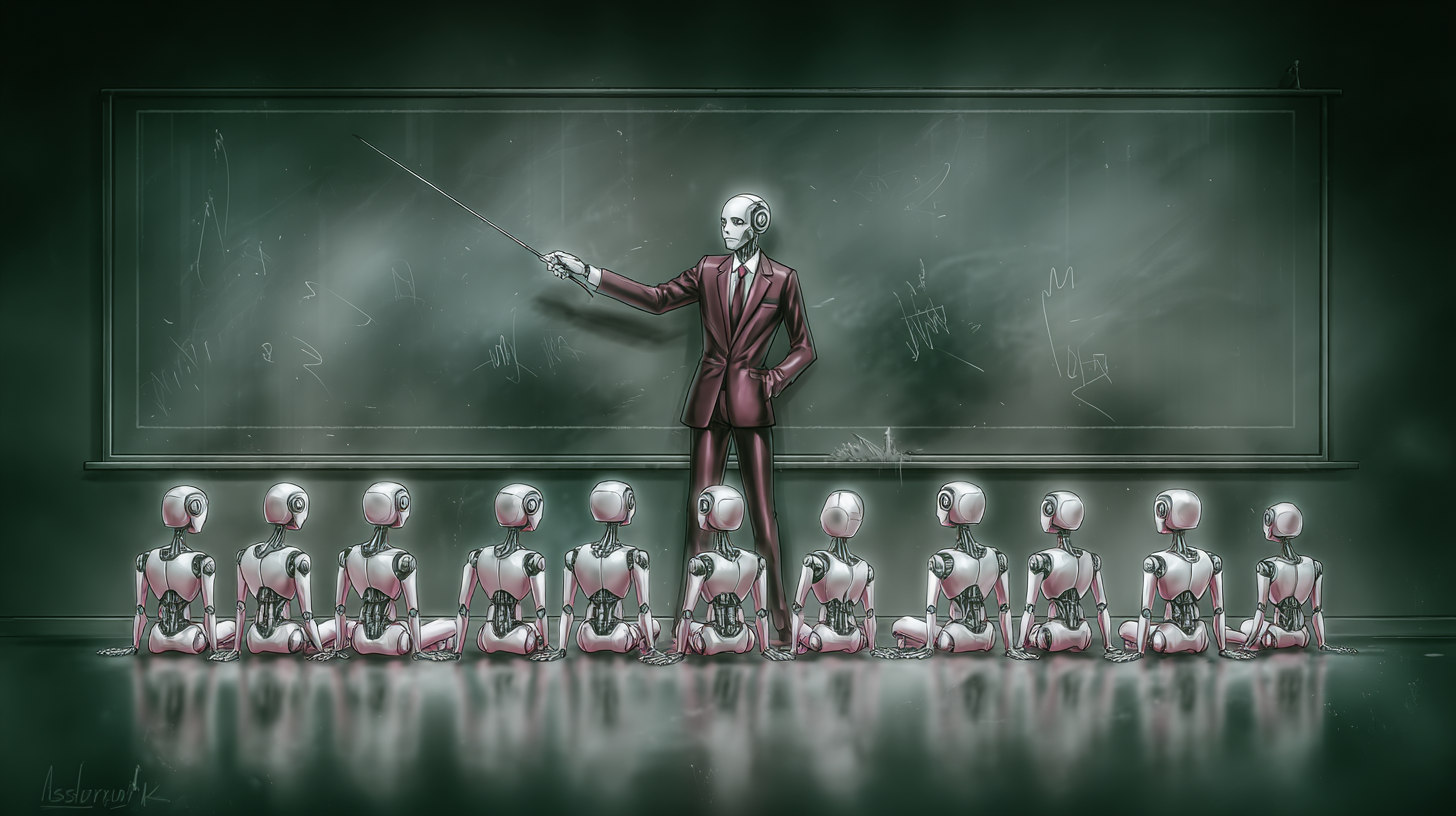

The teacher is the new engineer: Inside the rise of AI enablement and PromptOps

As more companies rush to adopt gen AI, it's important to avoid a big mistake that could undermine its effectiveness: skipping proper onboarding. Companies spend time and money training new human employees to succeed, but when they deploy large language model (LLM) helpers, many treat them as simple tools that need no explanation.

This isn't just a waste of resources; it's risky. Research shows that AI moved rapidly from testing to actual use between 2024 and 2025, with almost a third of companies reporting a sharp increase in usage and adoption over the previous year.

Probabilistic systems need governance, not wishful thinking

Unlike traditional software, gen AI is probabilistic and adaptive. It learns from interaction, can drift as data or usage changes, and operates in the gray zone between automation and agency. Treating it like static software ignores reality: without monitoring and updates, models degrade and produce faulty outputs, a phenomenon widely known as model drift. Gen AI also lacks built-in organizational intelligence. A model trained on internet data might write a Shakespearean sonnet, but it won't know your escalation paths and compliance constraints unless you teach it. Regulators and standards bodies have begun pushing guidance precisely because these systems behave dynamically and can hallucinate, mislead or leak data if left unchecked.

The true cost of skipping onboarding

When LLMs hallucinate, misinterpret tone, leak sensitive information, or reinforce biases, the costs are tangible.

- Misinformation and liability: A Canadian tribunal held Air Canada liable after the chatbot on its website gave a passenger incorrect policy information. The ruling made clear that companies remain responsible for their AI agents' statements.

- Embarrassing hallucinations: In 2025, a syndicated “summer reading list” carried by the Chicago Sun-Times and the Philadelphia Inquirer recommended books that didn't exist; the writer had used AI without adequate verification, leading to retractions and dismissals.

- Bias at scale: The Equal Employment Opportunity Commission's (EEOC) first AI discrimination settlement involved a hiring algorithm that automatically rejected older applicants, underscoring how unmonitored systems can amplify bias and create legal risk.

- Data leakage: After employees pasted sensitive code into ChatGPT, Samsung temporarily banned public generative AI tools on corporate devices, a misstep that better policies and training could have prevented.

The message is simple: AI deployed without onboarding and used without governance creates legal, security and reputational exposure.

Treat AI agents like new employees

Companies must onboard AI agents as deliberately as they do humans – with job descriptions, training curricula, feedback loops, and performance reviews. This is a cross-functional effort spanning data science, security, compliance, design, HR, and the end users who will interact with the system on a daily basis.

- Role definition. Describe the scope, inputs/outputs, escalation paths and acceptable failure modes. For example, a legal copilot can summarize contracts and flag risky clauses, but should avoid definitive legal rulings and escalate edge cases.

- Contextual training. Fine-tuning has its place, but for many teams, retrieval-augmented generation (RAG) and tool adapters are safer, cheaper and more controllable. RAG keeps models grounded in your latest, vetted knowledge (documents, policies, knowledge bases), reducing hallucinations and improving traceability. Emerging Model Context Protocol (MCP) integrations make it easier to connect copilots to enterprise systems in a controlled way, bridging models with tools and data while preserving separation of concerns. Salesforce's Einstein Trust Layer illustrates how vendors are formalizing secure grounding, masking, and audit controls for enterprise AI.

- Pre-production simulation. Don't let your AI's first “training” happen with real customers. Build high-fidelity sandboxes, stress-test tone, reasoning, and edge cases, then evaluate with human raters. Morgan Stanley built an evaluation regime for its GPT-4 assistant, with advisors and prompt engineers grading answers and refining prompts before broad rollout. The result: more than 98% adoption among advisor teams once quality thresholds were met. Vendors are also moving toward simulation: Salesforce recently highlighted digital-twin testing to train agents safely against realistic scenarios.

- Cross-functional mentorship. Treat early use as a two-way learning loop: domain experts and frontline users give feedback on tone, accuracy, and usability; security and compliance teams enforce boundaries and red lines; designers shape frictionless interfaces that encourage correct use.
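The grounding idea behind contextual training can be sketched in a few lines. This is a minimal, hypothetical illustration, not a production RAG pipeline: simple word overlap stands in for the embedding search a real system would use, the `Passage` records and document IDs are invented, and the call to the model itself is left out. What it shows is the shape of grounding: retrieve authoritative passages first, then constrain the prompt to those passages and require citations.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source_id: str  # hypothetical document ID, e.g. a policy number
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercased word tokens: a toy stand-in for an embedding model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Return up to k passages sharing the most words with the query.

    Passages with no overlap are dropped; ties keep corpus order because
    Python's sort is stable even with reverse=True.
    """
    query_terms = tokenize(query)
    scored = [(len(query_terms & tokenize(p.text)), p) for p in corpus]
    matches = [(score, p) for score, p in scored if score > 0]
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in matches[:k]]


def build_grounded_prompt(query: str, passages: list[Passage]) -> str:
    """Build a prompt that restricts the model to the retrieved, cited sources."""
    if passages:
        context = "\n".join(f"[{p.source_id}] {p.text}" for p in passages)
    else:
        context = "(no matching sources)"
    return (
        "Answer using only the sources below and cite their IDs. "
        "If the sources do not cover the question, say so and escalate.\n\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )
```

Keeping retrieval separate from prompt assembly mirrors the traceability argument above: every answer can be tied back to the source IDs that were placed in the prompt, and updating the corpus changes what the copilot knows without retraining anything.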

Feedback loops and performance reviews – forever

Onboarding doesn't end at go-live. The most meaningful learning begins after deployment.

- Monitoring and observability. Log outputs, track KPIs (accuracy, satisfaction, escalation rates) and watch for degradation. Cloud providers now offer observability and evaluation tooling to help teams detect anomalies and regressions in production, especially for RAG systems whose knowledge changes over time.

- User feedback channels. Provide in-product flagging and structured review queues so people can coach the model, then close the loop by feeding these signals into prompts, RAG sources, or fine-tuning sets.

- Regular audits. Schedule alignment checks, factual audits and safety evaluations. Microsoft's enterprise AI playbooks, for example, emphasize governance and staged rollouts with executive visibility and clear guardrails.

- Succession planning for models. As laws, products, and models evolve, plan upgrades and retirements the way you would plan people transitions: run overlap tests and carry over institutional knowledge (prompts, evaluation sets, retrieval sources).
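The monitoring item above can be made concrete with a rolling-window KPI tracker. The sketch below is hypothetical: it assumes each interaction already carries a correctness verdict (from reviewer flags or an automated check) and an escalation flag, and the window size, baseline, and tolerance are placeholder values a team would set from its own pre-launch evaluation.

```python
from collections import deque
from dataclasses import dataclass


@dataclass(frozen=True)
class Interaction:
    correct: bool    # verdict from reviewer flags or an automated check (assumed available)
    escalated: bool  # whether the copilot handed the case to a human


class KpiMonitor:
    """Rolling-window KPIs with a simple degradation alarm (hypothetical helper)."""

    def __init__(self, window: int, baseline_accuracy: float, tolerance: float = 0.05):
        self.recent = deque(maxlen=window)  # oldest interactions fall out automatically
        self.baseline_accuracy = baseline_accuracy
        self.tolerance = tolerance

    def record(self, interaction: Interaction) -> None:
        self.recent.append(interaction)

    def accuracy(self) -> float:
        if not self.recent:
            return 0.0
        return sum(i.correct for i in self.recent) / len(self.recent)

    def escalation_rate(self) -> float:
        if not self.recent:
            return 0.0
        return sum(i.escalated for i in self.recent) / len(self.recent)

    def degraded(self) -> bool:
        """Alarm only once the window is full, so a few early misses don't trigger it."""
        window_full = len(self.recent) == self.recent.maxlen
        return window_full and self.accuracy() < self.baseline_accuracy - self.tolerance
```

In practice `degraded()` would feed an alert or a review queue rather than a return value; the same baseline-plus-tolerance check can be applied to the escalation rate, since a sudden jump in hand-offs is often the first visible sign of drift.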

Why this is urgent now

Gen AI is no longer an “innovation shelf” project; it is embedded in CRMs, support desks, analytics pipelines, and executive workflows. Banks like Morgan Stanley and Bank of America are focusing AI on internal copilot use cases to boost employee efficiency while limiting customer-facing risk, an approach that relies on structured onboarding and careful scoping. Meanwhile, security leaders say gen AI is everywhere, yet a third of adopters have not implemented basic risk mitigations, a gap that invites shadow AI and data exposure.

The AI-native workforce also expects better: transparency, traceability, and the ability to shape the tools they use. Organizations that provide this through training, clear UX affordances and responsive product teams see faster adoption and fewer workarounds. When users trust a copilot, they use it; when they don't, they bypass it.

As onboarding matures, expect to see AI enablement managers and PromptOps specialists in more org charts, curating prompts, managing retrieval sources, running evaluation suites, and coordinating cross-functional updates. Microsoft's internal Copilot rollout points to this operational discipline: centers of excellence, governance templates and executive-ready deployment playbooks. These practitioners are the “teachers” who keep AI aligned with fast-changing business goals.

A practical onboarding checklist

If you’re introducing (or rescuing) a corporate copilot, start here:

- Write the job description. Scope, inputs/outputs, tone, red lines, escalation rules.

- Ground the model. Implement RAG (and/or MCP-style adapters) to connect to authoritative, access-controlled sources; wherever possible, prefer dynamic grounding over broad fine-tuning.

- Build the simulator. Create scripted and seeded scenarios; measure accuracy, coverage, tone, and safety; require human sign-offs before graduating each stage.

- Ship with guardrails. DLP, data masking, content filters and audit trails (see vendor trust layers and responsible AI standards).

- Instrument feedback. In-product flagging, analytics and dashboards; weekly triage.

- Assess and retrain. Monthly alignment checks, quarterly factual audits and planned model upgrades, with side-by-side A/B tests to prevent regressions.
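Three checklist items fit together naturally: a job description yields scripted scenarios, the simulator scores a copilot against them, and the same suite can gate a side-by-side model upgrade. The sketch below is hypothetical throughout. `JobDescription`, `Scenario`, `regression_gate`, and the keyword-matching stub copilot are illustrative names, not any vendor's API; a real copilot would wrap an LLM call, and its responses would be graded by human raters or an evaluator rather than by exact label matching.

```python
import re
from dataclasses import dataclass
from typing import Callable

# A copilot is modelled as any function from a request to an action label.
# A real one would wrap an LLM call; the rule-based stub below is hypothetical.
Copilot = Callable[[str], str]


@dataclass(frozen=True)
class JobDescription:
    scope: frozenset[str]      # topic words the copilot may handle
    red_lines: frozenset[str]  # topic words that must always be escalated


@dataclass(frozen=True)
class Scenario:
    request: str
    expected: str  # "answer" or "escalate"


def make_rule_copilot(job: JobDescription) -> Copilot:
    """Stand-in copilot: escalate on any red line or anything outside scope."""
    def copilot(request: str) -> str:
        words = set(re.findall(r"[a-z]+", request.lower()))
        if words & job.red_lines or not words & job.scope:
            return "escalate"
        return "answer"
    return copilot


def pass_rate(copilot: Copilot, suite: list[Scenario]) -> float:
    """Fraction of scripted scenarios where the copilot took the expected action."""
    return sum(copilot(s.request) == s.expected for s in suite) / len(suite)


def regression_gate(incumbent: Copilot, candidate: Copilot,
                    suite: list[Scenario], min_rate: float = 0.95) -> bool:
    """Promote the candidate only if it clears min_rate AND passes every
    scenario the incumbent already passes (no side-by-side regressions)."""
    if pass_rate(candidate, suite) < min_rate:
        return False
    return all(candidate(s.request) == s.expected
               for s in suite if incumbent(s.request) == s.expected)


# Example: a legal copilot that summarizes contracts and flags clauses
# but never issues rulings (the scope words are illustrative only).
legal_job = JobDescription(
    scope=frozenset({"summarize", "contract", "clause"}),
    red_lines=frozenset({"ruling"}),
)
suite = [
    Scenario("Summarize this contract", "answer"),
    Scenario("Flag the riskiest clause", "answer"),
    Scenario("Give a final ruling on this contract", "escalate"),
    Scenario("Book me a flight", "escalate"),
]
incumbent = make_rule_copilot(legal_job)
print(pass_rate(incumbent, suite))                            # 1.0
print(regression_gate(incumbent, lambda r: "answer", suite))  # False
```

Requiring the candidate to pass every scenario the incumbent already passes, rather than only beating it on average, is one design choice among several; it keeps a single regressed red line from hiding behind a better aggregate score.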

In a future where every employee has an AI teammate, the organizations that take onboarding seriously will move faster, more safely, and with greater purpose. Gen AI doesn't just need data or compute; it needs guidance, goals and growth plans. Treating AI systems as teachable, improvable, and accountable team members turns hype into habitual value.

Dhyey Mavani is accelerating generative AI at LinkedIn.