The Reinforcement Gap — or why some AI skills improve faster than others

AI coding tools are getting better fast. If you don't work in code, it can be hard to notice how much things are changing, but GPT-5 and Gemini 2.5 have made a whole new set of developer tricks possible to automate, and last week Sonnet 4.5 did it again.

At the same time, other skills are progressing more slowly. If you use AI to write emails, you are probably getting the same value out of it that you did a year ago. Even when the model gets better, the product doesn't always benefit, especially when the product is a chatbot juggling a dozen different jobs at once. AI is still making progress, but it is not as evenly distributed as it used to be.

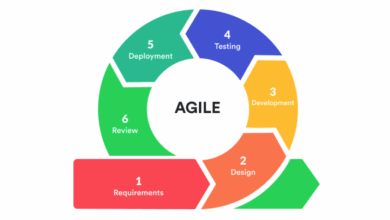

The difference in progress is simpler than it seems. Coding apps benefit from billions of easily measurable tests, which can train them to produce workable code. This is reinforcement learning (RL), arguably the biggest driver of AI progress over the past six months, and it keeps getting more intricate. You can do reinforcement learning with human graders, but it works best when there is a clear pass-fail metric, so you can repeat it billions of times without stopping for human input.
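
To make the pass-fail point concrete, here is a toy sketch in Python. It is not how any lab actually trains models. A learner chooses among three hypothetical candidate implementations of `abs(x)`, an automatic grader scores each choice 1 or 0 against fixed test cases, and a gradient-bandit update (a simple textbook RL method, swapped in here purely for illustration) shifts probability toward whatever passes. The candidates, test cases, step count, and learning rate are all assumptions.

```python
import math
import random

# Hypothetical candidate "solutions" for one toy task: compute abs(x).
# Only one is correct; the learner is not told which.
CANDIDATES = [
    lambda x: x,                    # wrong for negative inputs
    lambda x: -x,                   # wrong for positive inputs
    lambda x: x if x >= 0 else -x,  # correct
]

# Illustrative test cases: (input, expected output).
TEST_CASES = [(-3, 3), (0, 0), (5, 5), (-1, 1)]


def grade(solution) -> float:
    """Automatic pass-fail grader: 1.0 if every test passes, else 0.0."""
    return 1.0 if all(solution(x) == want for x, want in TEST_CASES) else 0.0


def softmax(prefs):
    """Turn preference scores into a probability distribution."""
    m = max(prefs)
    exps = [math.exp(p - m) for p in prefs]
    total = sum(exps)
    return [e / total for e in exps]


def train(steps: int = 2000, alpha: float = 0.1, seed: int = 0):
    """Gradient-bandit learner: sample a candidate, grade it, nudge preferences.

    The grader is called once per step with no human in the loop.
    """
    rng = random.Random(seed)
    prefs = [0.0] * len(CANDIDATES)
    baseline = 0.0  # running average of past rewards
    for t in range(1, steps + 1):
        probs = softmax(prefs)
        a = rng.choices(range(len(CANDIDATES)), weights=probs)[0]
        reward = grade(CANDIDATES[a])
        # Raise the chosen candidate's preference if it beat the baseline,
        # lower it if it fell short; shift the others the opposite way.
        for i in range(len(prefs)):
            indicator = 1.0 if i == a else 0.0
            prefs[i] += alpha * (reward - baseline) * (indicator - probs[i])
        baseline += (reward - baseline) / t
    return softmax(prefs)


if __name__ == "__main__":
    print([round(p, 3) for p in train()])
```

The design point is the `grade` function: because it is cheap and fully automatic, the loop can run thousands of times in a fraction of a second. Replacing it with a human rater would require one human judgment per iteration, which is exactly the bottleneck the paragraph describes.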

As the industry relies increasingly on reinforcement learning to improve products, we are seeing a real difference between capabilities that can be graded automatically and those that cannot. RL-friendly skills such as bug fixing and competitive math are improving quickly, while skills such as writing are making only incremental progress.

In short, there is a reinforcement gap, and it is becoming one of the most important factors in what AI systems can and cannot do.

In some respects, software development is the perfect subject for reinforcement learning. Even before AI, there was a whole subdiscipline devoted to testing how software holds up under pressure, largely because developers had to make sure their code would not break before they deployed it. So even the most elegant code still has to pass unit tests, integration tests, security tests, and so on. Human developers use these tests routinely to validate their code and, as Google's senior director for dev tools recently told me, they are just as useful for validating AI-generated code. More than that, they are useful for reinforcement learning, because they are already systematized and repeatable at massive scale.
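
A minimal sketch of how an existing test suite can double as a reward signal, assuming a hypothetical task in which a model must write a function named `is_palindrome`. The generated source is executed, a small `unittest` suite runs against it, and the score is 1.0 only if every test passes. The function name, the tests, and the all-or-nothing scoring are illustrative assumptions; a real system would also sandbox the untrusted code instead of calling `exec` directly.

```python
import unittest


def run_test_suite(source: str) -> float:
    """Score generated code with a unit-test suite.

    Returns 1.0 if every test passes, and 0.0 otherwise, including when the
    code fails to compile or does not define the expected function.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)  # assumption: real systems sandbox this step
        func = namespace["is_palindrome"]  # hypothetical required function
    except Exception:
        return 0.0

    class PalindromeTests(unittest.TestCase):
        # Illustrative tests; the methods close over `func` defined above.
        def test_simple(self):
            self.assertTrue(func("racecar"))

        def test_not_palindrome(self):
            self.assertFalse(func("python"))

        def test_ignores_case(self):
            self.assertTrue(func("Level"))

        def test_empty(self):
            self.assertTrue(func(""))

    suite = unittest.defaultTestLoader.loadTestsFromTestCase(PalindromeTests)
    result = unittest.TestResult()
    suite.run(result)  # failures and exceptions are recorded, not raised
    return 1.0 if result.wasSuccessful() else 0.0


# Two hypothetical model outputs for the same prompt.
GOOD = "def is_palindrome(s):\n    s = s.lower()\n    return s == s[::-1]\n"
BUGGY = "def is_palindrome(s):\n    return s == s[::-1]\n"  # ignores case

if __name__ == "__main__":
    print(run_test_suite(GOOD), run_test_suite(BUGGY))
```

Here `GOOD` passes all four tests while `BUGGY` fails the case-insensitivity test, so only the first would be reinforced. The tests themselves are ordinary developer tooling, which is why this kind of signal is already available at scale.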

There is no easy way to validate a well-written email or a good chatbot response; these skills are inherently subjective and harder to measure at scale. But not every task falls neatly into the categories “easy to test” or “hard to test”. We do not have an out-of-the-box testing kit for quarterly financial reports or actuarial science, but a well-capitalized accounting startup could probably build one from scratch. Some testing kits will of course work better than others, and some companies will be smarter about how to approach the problem. But the testability of the underlying process will be the deciding factor in whether that process can be made into a functional product instead of just an exciting demo.

Some processes turn out to be more testable than you might think. If you had asked me last week, I would have put AI-generated video in the “hard to test” category, but the enormous progress made by OpenAI's new Sora 2 model shows that it may not be as hard as it looks. In Sora 2, objects no longer appear and disappear out of nowhere. Faces hold their shape, looking like a specific person rather than just a collection of features. Sora 2 footage respects the laws of physics in both obvious and subtle ways. I suspect that if you peeked behind the curtain, you would find a robust reinforcement learning system for each of these qualities. Together, they make the difference between photorealism and an entertaining hallucination.

To be clear, this is not a hard-and-fast rule of artificial intelligence. It is a result of the central role that reinforcement learning plays in AI development, which could easily change as models develop. But as long as RL is the primary tool for bringing AI products to market, the reinforcement gap will only grow, with serious implications for both startups and the economy at large. If a process ends up on the right side of the reinforcement gap, startups will probably succeed in automating it, and anyone doing that work now may end up looking for a new career. The question of which healthcare services are RL-trainable, for instance, has huge implications for the shape of the economy over the next 20 years. And if surprises such as Sora 2 are any indication, we may not have to wait long for an answer.