Runway releases its first world model, adds native audio to latest video model

The race to release world models is on as AI image and video generation company Runway joins a growing number of startups and Big Tech companies by launching its first one. The model, called GWM-1, works through frame-by-frame prediction, creating a simulation with an understanding of physics and how the world actually behaves over time, the company said.

A world model is an AI system that learns an internal simulation of how the world works so it can reason, plan, and act without needing to be trained on every possible real-life scenario.

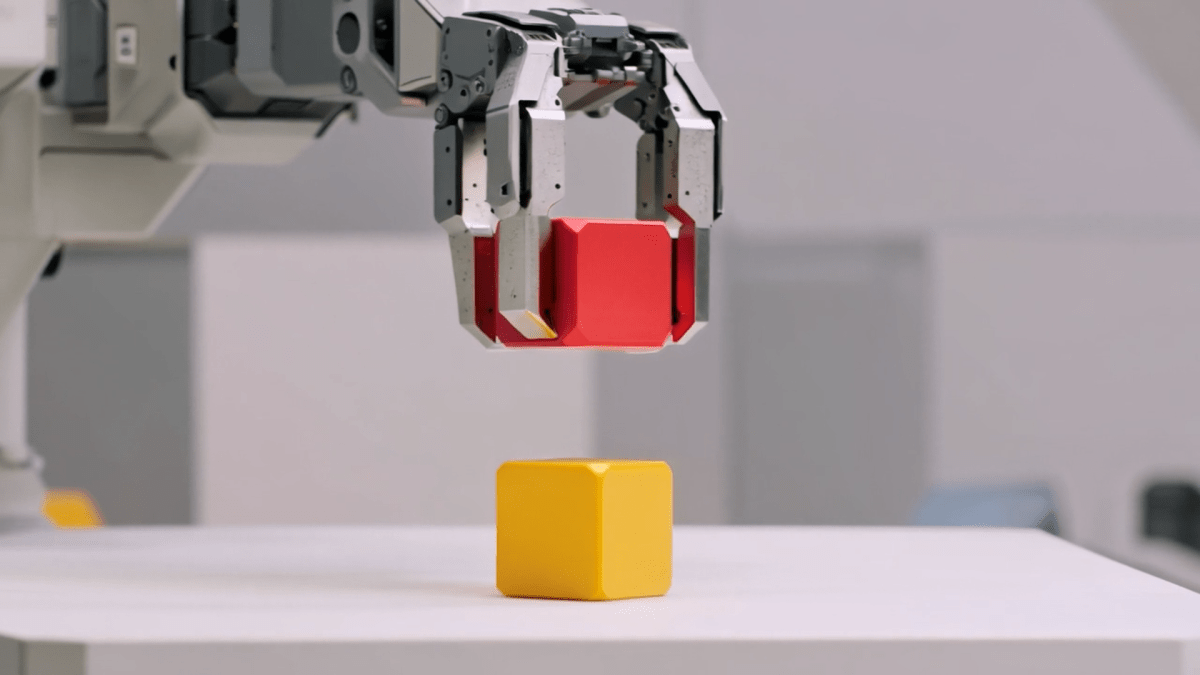

Runway, which earlier this month launched its Gen 4.5 video model that surpassed both Google and OpenAI on the Video Arena leaderboard, said the GWM-1 world model is “more general” than Google’s Genie-3 and other competitors. The company is pitching it as a model that can create simulations to train agents in various domains, such as robotics and life sciences.

“To build a world model, we first had to build a really great video model. We believe that the right path to building a world model is teaching models to predict pixels directly, which is the best way to achieve general-purpose simulation. At enough scale and with the right data, you can build a model that has enough insight into how the world works,” the company’s CTO, Anastasis Germanidis, said during the livestream.

Runway has released three specialized variants of the new world model: GWM-Worlds, GWM-Robotics and GWM-Avatars.

GWM-Worlds is an app for the model that lets users create an interactive project. Users can set a scene through a prompt or an image reference, and as they explore the space, the model generates the world with an understanding of geometry, physics and lighting. The company said the simulation runs at 24 fps and 720p resolution. Runway said that while Worlds could be useful for gaming, it is also well-positioned for teaching agents how to navigate and behave in the physical world.

With GWM-Robotics, the company aims to use synthetic data enriched with new parameters, such as changing weather conditions or obstacles. Runway says this method can also reveal when and how robots might violate policies and instructions in different scenarios.

Runway is also building realistic avatars under GWM-Avatars to simulate human behavior. Companies like D-ID, Synthesia, Soul Machines, and even Google have been working to create human avatars that look real and work in areas like communication and training.

The company noted that Worlds, Robotics and Avatars are technically separate models, but it eventually plans to merge them all into one model.

In addition to releasing a new world model, the company is also updating its foundational Gen 4.5 model released earlier this month. The update brings native audio and long-form, multi-shot generation capabilities to the model. The company said the updated model will allow users to generate one-minute videos with character consistency, native dialogue, background audio and complex shots from different angles. The company said users can also edit existing audio and add dialogue. Moreover, they can edit multi-shot videos of any length.

The Gen 4.5 update brings Runway closer to competitor Kling’s all-in-one video suite, which was also launched earlier this month, especially around native audio and multi-shot storytelling. It also indicates that video generation models are evolving from prototypes into production-ready tools. Runway’s updated Gen 4.5 model is available to all users on paid plans.

The company said it will make GWM-Robotics available through an SDK. It added that it is in active discussions with several robotics companies and enterprises about the use of GWM-Robotics and GWM-Avatars.