Nvidia is quietly building a multibillion-dollar behemoth to rival its chips business

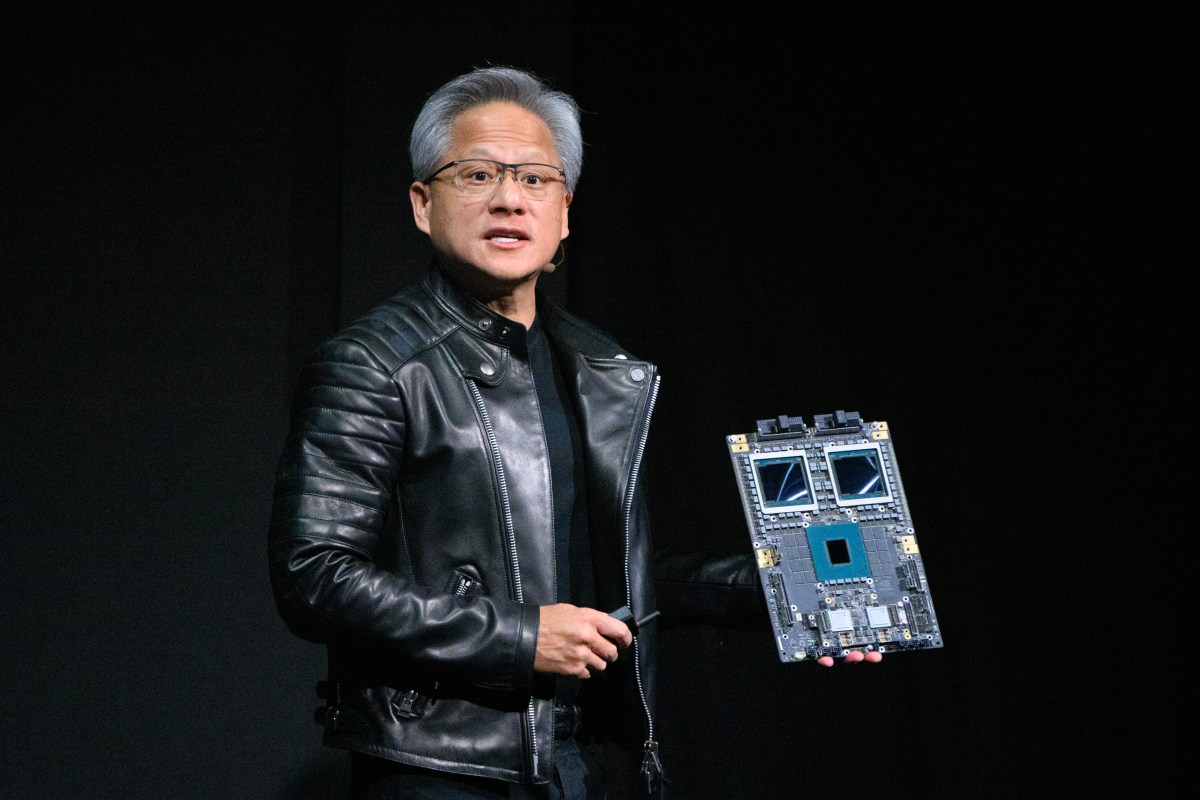

Nvidia CEO Jensen Huang was years ahead of the market when he pushed the company to start tinkering with building AI-specific chips in 2010, more than a decade before the current buzz around AI. A similar move in 2020 – doubling down on data center networks with a strategic acquisition – has created one of the company’s most lucrative and fast-growing divisions, but with little fanfare.

In just a few years, Nvidia’s networking business, designed to connect data centers, has become the company’s second-largest revenue generator after compute. Last quarter, the division reported revenue of $11 billion, a year-over-year increase of 267%, and it raked in more than $31 billion for the full year, according to Nvidia.

Driven by the growth in AI processing, the division includes technologies such as NVLink, which enables communication between GPUs in a data center rack; Nvidia InfiniBand switches, an in-network computing platform; its Ethernet platform for AI networking; and co-packaged optical switches, among others.

Together, Nvidia’s networking operations include all the technology needed to build an “AI factory,” a data center designed for training AI models.

Kevin Cook, a senior equity strategist at Zacks Investment Research, told TechCrunch that Nvidia’s networking business is one of the company’s most impressive new segments. “[Nvidia’s networking business] reports $11 billion for the quarter; that number is larger than Cisco’s networking business, almost as large as full-year estimates,” Cook said, adding that it does in a quarter what Cisco’s business does in a year.

And yet the networking segment doesn’t attract the same attention as the company’s chip business, which is significantly larger. It also doesn’t get as much buzz as the company’s gaming operations, its original bread-and-butter business, which is almost three times smaller.

The origins of Nvidia’s networking business come from Mellanox, a networking company founded in Israel in 1999 that Nvidia acquired for $7 billion in 2020.

Kevin Deierling is senior vice president of networking at Nvidia and joined the company through the acquisition of Mellanox. Deierling told TechCrunch that the lack of awareness of Nvidia’s networking business could be his fault for marketing it poorly, but he doesn’t like that answer.

“People think of networking as, ‘I have a printer and I need to connect to it,’” Deierling says. “Jensen said this on the first day he took over: he said the data center is the new computing unit. Networking is much more than just moving smaller amounts of data between a computing node, it’s actually a foundation.”

Although Deierling said he didn’t really understand why Huang bought the company when he did, he gets it now. By having a networking business in addition to the GPU business, the company can sell its chips with the technology they work best with.

“When Jensen bought Mellanox in 2020, he saw it was the missing piece to making GPUs a complete package,” said Cook, the Zacks analyst.

Deierling added that he thinks another factor in the success of Nvidia’s networking business is that it sells the technology only as a full-stack solution, as opposed to individual components, and that it does not sell the technology directly, but through its partners.

“I can’t think of any other companies that have the full-stack capabilities that we have,” said Deierling. “We are really different. We build the entire compute stack, a fully integrated stack, and then go to market through all our partners.”

Nvidia just announced a whole new range of updates to its networking systems during Huang’s March 16 keynote address at the company’s annual Nvidia GTC technology conference. The company launched the Nvidia Rubin platform, which includes six new chips to power an “AI supercomputer.” Nvidia also announced a new Nvidia Inference Context Memory Storage platform and more efficient Nvidia Spectrum-X Ethernet Photonics switches, among other things.

“It’s no longer a peripheral to connect the printer or another slow I/O device,” Deierling said of networking. “It’s fundamental to the computer. We used to have what was called the back of the computer. Today, the network is the back of the AI factory, and it’s super important.”