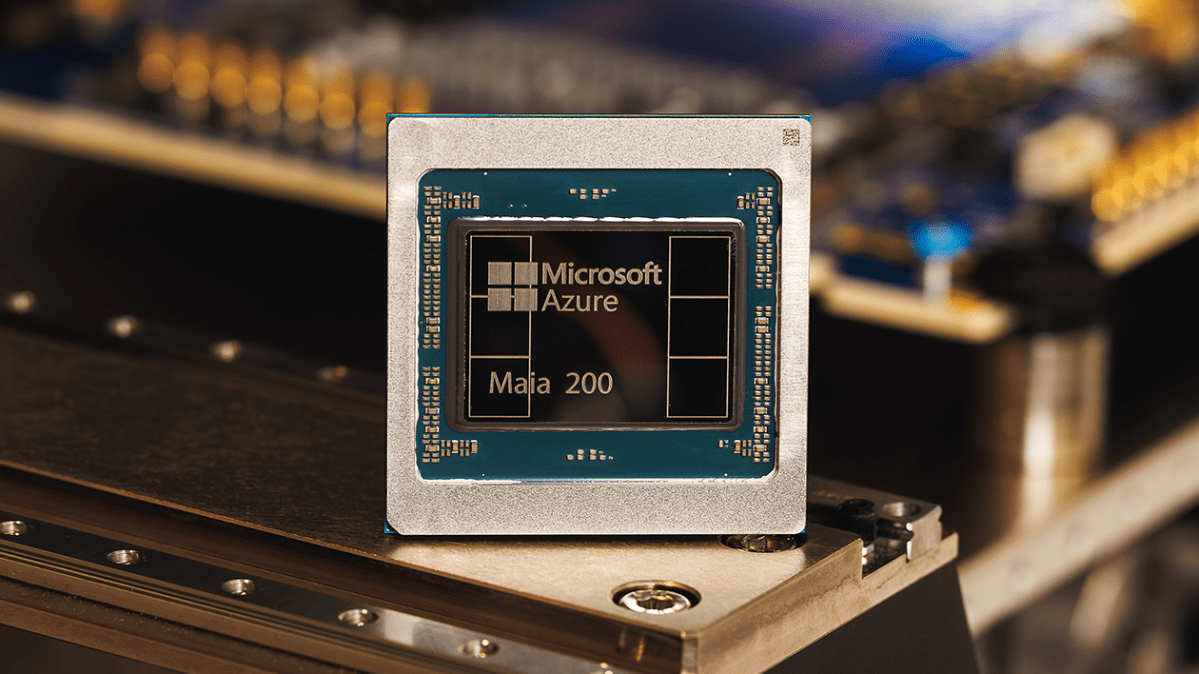

Microsoft announces powerful new chip for AI inference

Microsoft has announced the launch of its latest chip, the Maia 200, which the company describes as a silicon workhorse designed for scaling AI inference.

The Maia 200, which follows the company’s Maia 100 released in 2023, is technically equipped to run powerful AI models at faster speeds and with greater efficiency, the company said. The chip packs more than 100 billion transistors, delivering more than 10 petaflops at 4-bit precision and approximately 5 petaflops at 8-bit precision, a significant increase over its predecessor.

Inference refers to the computational process of running a model, as opposed to the computing power required to train it. As AI companies mature, inference costs have become an increasingly important part of their overall operating costs, leading to renewed interest in ways to optimize the process.

Microsoft hopes the Maia 200 can be part of that optimization, allowing AI companies to operate with less disruption and lower energy consumption. “In practice, a single Maia 200 node can effortlessly run today’s largest models, with plenty of headroom for even larger models in the future,” the company said.

Microsoft’s new chip is also part of a growing trend of tech giants turning to in-house designed chips as a way to reduce their dependence on NVIDIA, whose advanced GPUs have become increasingly important to the success of AI companies. Google, for example, has its TPUs (tensor processing units), which are sold not as chips but as computing power made accessible via its cloud. Then there’s Amazon’s Trainium, the e-commerce giant’s own AI accelerator chip, whose latest version, the Trainium3, launched in December. In each case, these chips can be used to offload some of the computing work that would otherwise be assigned to NVIDIA GPUs, reducing overall hardware costs.

With Maia, Microsoft is positioning itself to compete with those alternatives. In its Monday press release, the company noted that Maia delivers three times the FP4 performance of third-generation Amazon Trainium chips, and FP8 performance better than Google’s seventh-generation TPU.

Microsoft says Maia is already hard at work powering the company’s AI models from its Superintelligence team. It also supports the operations of Copilot, the company’s chatbot. As of Monday, the company said it has invited a variety of parties, including developers, academics, and frontier AI labs, to use the Maia 200 software development kit in their workloads.
