Inception raises $50 million to build diffusion models for code and text

With so much money flowing into AI startups, it’s a good time to be an AI researcher with an idea to test out. And if the idea is new enough, it might be easier to get the resources you need as an independent company rather than within one of the big labs.

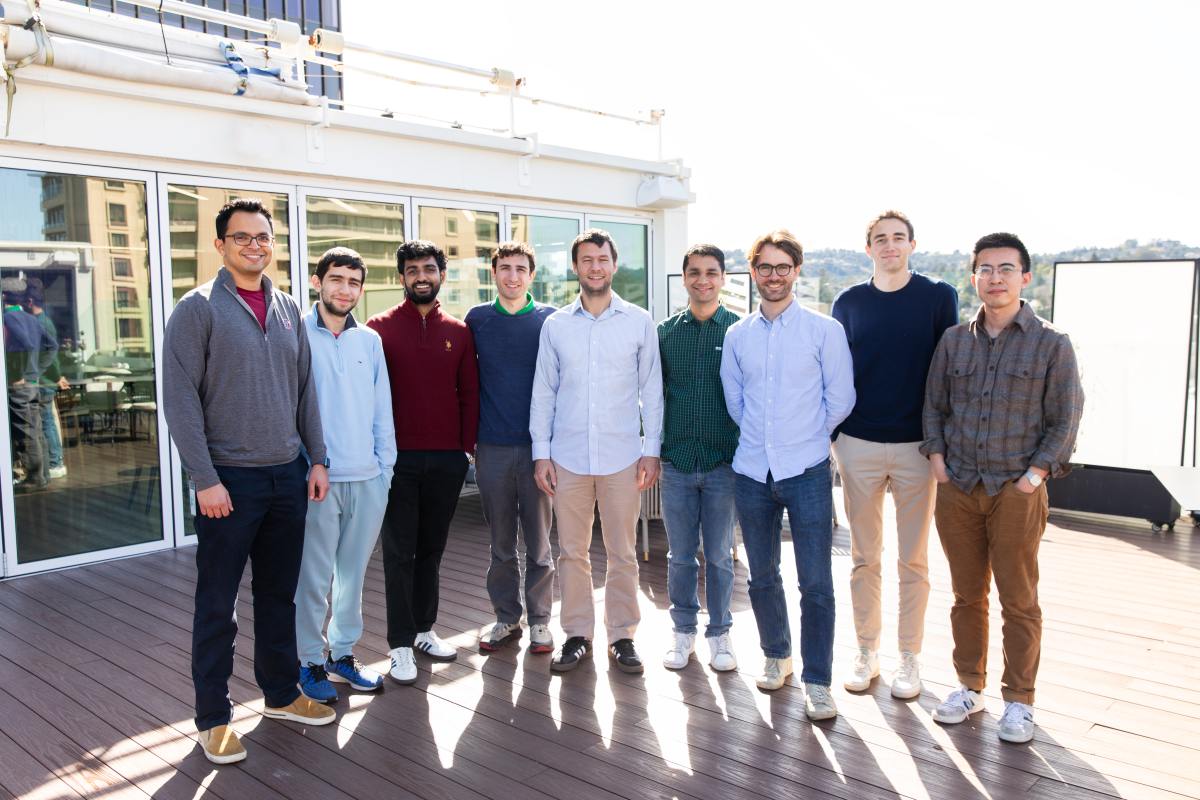

That’s the story of Inception, a startup developing diffusion-based AI models that just raised $50 million in seed funding led by Menlo Ventures, with participation from Mayfield, Innovation Endeavors, Nvidia’s NVentures, Microsoft’s M12 fund, Snowflake Ventures, and Databricks Investment. Andrew Ng and Andrej Karpathy provided additional angel funding.

The project is led by Stanford professor Stefano Ermon, whose research focuses on diffusion models, which generate outputs through iterative refinement rather than word by word. These models power image-based AI systems such as Stable Diffusion, Midjourney and Sora. Ermon worked on diffusion models before the AI boom made them exciting, and he is using Inception to apply the same models to a broader range of tasks.

Alongside the funding, the company released a new version of its Mercury model, designed for software development. Mercury has already been integrated into a number of development tools, including ProxyAI, Buildglare and Kilo Code. Most importantly, Ermon says the diffusion approach will help Inception's models improve on two of the most important metrics: latency (response time) and compute cost.

“These diffusion-based LLMs are much faster and much more efficient than what anyone is building today,” says Ermon. “It’s just a very different approach that can still bring a lot of innovation to the table.”

Understanding the technical difference requires some background. Diffusion models are structurally different from autoregressive models, which dominate text-based AI services. Autoregressive models such as GPT-5 and Gemini work sequentially, predicting each next word or word fragment based on the material processed so far. Diffusion models trained for image generation take a more holistic approach, modifying the overall structure of a response incrementally until it matches the desired result.
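To make the contrast concrete, here is a toy sketch of the two decoding styles. It is not Inception's Mercury implementation: the "models" below are hypothetical stand-ins that already know the answer, and the confidence scores are arbitrary. What it illustrates is the shape of the loop. The autoregressive decoder needs one model call per token, while the masked-diffusion-style decoder starts from a fully masked sequence and fills in several positions per call, in order of (toy) confidence rather than strictly left to right.

```python
from typing import Callable, List, Optional, Tuple

MASK = None  # placeholder for a position that has not been decided yet

# Toy target output: a short code snippet, already tokenized (hypothetical).
TARGET = "def add ( a , b ) : return a + b".split()


def autoregressive_decode(
    predict_next: Callable[[List[str]], str], length: int
) -> Tuple[List[str], int]:
    """Emit one token per model call; each call sees only the prefix so far."""
    tokens: List[str] = []
    calls = 0
    while len(tokens) < length:
        tokens.append(predict_next(tokens))
        calls += 1
    return tokens, calls


def diffusion_decode(
    predict_all: Callable[[List[Optional[str]]], Tuple[List[str], List[float]]],
    length: int,
    per_step: int,
) -> Tuple[List[str], int]:
    """Start fully masked; each model call proposes every position at once,
    and the most confident still-masked positions are committed."""
    tokens: List[Optional[str]] = [MASK] * length
    calls = 0
    while any(t is MASK for t in tokens):
        proposal, confidence = predict_all(tokens)
        calls += 1
        masked = [i for i, t in enumerate(tokens) if t is MASK]
        # Commit the highest-confidence positions, not necessarily left to right.
        masked.sort(key=lambda i: confidence[i], reverse=True)
        for i in masked[:per_step]:
            tokens[i] = proposal[i]
    return [t for t in tokens if t is not MASK], calls


# Hypothetical stand-ins for a trained network: they simply "know" TARGET.
def toy_predict_next(prefix: List[str]) -> str:
    return TARGET[len(prefix)]


def toy_predict_all(current: List[Optional[str]]) -> Tuple[List[str], List[float]]:
    # Arbitrary toy confidence scores (longer tokens count as "more certain").
    return list(TARGET), [float(len(tok)) for tok in TARGET]


if __name__ == "__main__":
    ar_tokens, ar_calls = autoregressive_decode(toy_predict_next, len(TARGET))
    df_tokens, df_calls = diffusion_decode(toy_predict_all, len(TARGET), per_step=4)
    print(" ".join(ar_tokens), "| sequential model calls:", ar_calls)  # 12 calls
    print(" ".join(df_tokens), "| sequential model calls:", df_calls)  # 3 calls
```

Both loops produce the same 12-token snippet, but the diffusion-style loop gets there in 3 sequential model calls instead of 12. In a real system each call is a full neural-network pass, and how many positions can safely be committed per pass is a trade-off against output quality.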

The conventional wisdom is to use autoregressive models for text applications, and that approach has been wildly successful for recent generations of AI models. But a growing body of research suggests that diffusion models may perform better when a model is processing large quantities of text or managing data constraints. As Ermon tells it, those qualities become a real advantage when performing operations on large codebases.

Diffusion models also have more flexibility in how they use hardware, an especially important advantage as the infrastructure demands of AI become clear. Whereas autoregressive models must execute operations one after another, diffusion models can process many operations simultaneously, allowing significantly lower latency on complex tasks.
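A back-of-the-envelope way to see why parallelism matters for latency: wall-clock time is driven mostly by how many forward passes must run one after another. The sketch below is a simplification under stated assumptions. The per-pass times (10 ms and 25 ms) and the 50 tokens committed per parallel pass are hypothetical numbers chosen for illustration, not Inception's benchmarks, and real systems also spend time on batching, memory transfers and quality-related extra steps.

```python
import math


def passes_autoregressive(num_tokens: int) -> int:
    """One sequential forward pass per generated token."""
    return num_tokens


def passes_parallel(num_tokens: int, tokens_per_pass: int) -> int:
    """Each refinement pass commits up to tokens_per_pass positions at once."""
    return math.ceil(num_tokens / tokens_per_pass)


def latency_ms(passes: int, ms_per_pass: float) -> float:
    """Approximate wall-clock time as sequential passes times cost per pass."""
    return passes * ms_per_pass


if __name__ == "__main__":
    n = 1000  # tokens to generate
    # Hypothetical per-pass costs: a parallel pass does more work, so it is slower.
    ar = latency_ms(passes_autoregressive(n), ms_per_pass=10.0)
    par = latency_ms(passes_parallel(n, tokens_per_pass=50), ms_per_pass=25.0)
    print(f"autoregressive: {ar:.0f} ms, parallel refinement: {par:.0f} ms")
    # prints "autoregressive: 10000 ms, parallel refinement: 500 ms"
```

Even though each parallel pass is assumed to cost more than an autoregressive one, cutting the number of sequential passes from 1,000 to 20 dominates the total. This is the kind of hardware advantage the parallel approach is meant to exploit, since GPUs are built to do a lot of work per step.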

“We’re benchmarked at over 1,000 tokens per second, which is way higher than anything possible using existing autoregressive technologies,” says Ermon, “because our thing is built to be parallel. It’s built to be very, very fast.”