IBM's open-source Granite 4.0 Nano AI models are small enough to run locally, directly in your browser

In an industry where model size is often seen as a measure of intelligence, IBM is charting a different course: one that values efficiency over enormity, and accessibility over abstraction.

The 114-year-old technology giant's four new Granite 4.0 Nano models, released today, range from just 350 million to 1.5 billion parameters, a fraction of the size of their server-bound cousins from the likes of OpenAI, Anthropic, and Google.

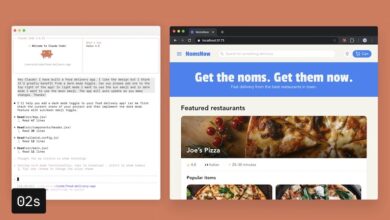

These models are designed to be highly accessible: the 350M variants can comfortably run on a modern laptop CPU with 8-16 GB of RAM, while the 1.5B models typically require a GPU with at least 6-8 GB of VRAM for smooth performance – or enough system RAM and swap for CPU-only inference. This makes them well suited for developers building applications on consumer hardware or at the edge, without relying on cloud computing.
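A quick way to see why these parameter counts fit on consumer hardware is to estimate how much memory the weights alone occupy at common numeric precisions. The sketch below is a back-of-the-envelope calculation, assuming weights only: activations, cache or state memory, and runtime overhead are ignored, so real-world usage will be higher. The function and table names are illustrative, not part of any Granite tooling.

```python
# Back-of-the-envelope estimate of memory needed just to hold model weights.
# Assumption: weights only; activations, KV cache / SSM state, and runtime
# overhead are ignored, so actual memory use will be higher.

BYTES_PER_PARAM = {
    "fp32": 4.0,
    "fp16/bf16": 2.0,
    "int8": 1.0,
    "int4": 0.5,
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for name, params in [("~350M", 350e6), ("~1.5B", 1.5e9)]:
        for precision in BYTES_PER_PARAM:
            gb = weight_memory_gb(params, precision)
            print(f"{name:>6} @ {precision:<9}: {gb:.2f} GB")
```

At 16-bit precision, a ~350M-parameter model needs roughly 0.7 GB for its weights and a ~1.5B model roughly 3 GB, which leaves headroom for the runtime on the hardware tiers described above; quantized formats shrink these figures further.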

In fact, the smallest ones can even run locally in your own web browser, as Joshua Lochner, also known as Xenova, creator of Transformers.js and a machine learning engineer at Hugging Face, wrote on the social network X.

All Granite 4.0 Nano models are released under the Apache 2.0 license, making them suitable for researchers as well as enterprise and independent developers, including for commercial use.

They are natively compatible with llama.cpp, vLLM and MLX and are certified under ISO 42001 for responsible AI development – a standard that IBM helped pioneer.

But in this case, small doesn’t mean less capable; it could just mean smarter design.

These compact models are not built for data centers, but for edge devices, laptops and local inference, where computing power is scarce and latency matters.

And despite their small size, the Nano models show benchmark results that match or even exceed the performance of larger models in the same category.

The release signals that a new AI frontier is rapidly emerging: one defined not by sheer scale, but by strategic scaling.

What exactly has IBM released?

The Granite 4.0 Nano family includes four open-source models, now available on Hugging Face:

- Granite-4.0-H-1B (~1.5B parameters) – Hybrid-SSM architecture
- Granite-4.0-H-350M (~350M parameters) – Hybrid-SSM architecture
- Granite-4.0-1B – Transformer-based variant, parameter count closer to 2B
- Granite-4.0-350M – Transformer-based variant

The H-series models, Granite-4.0-H-1B and H-350M, use a hybrid state-space model (SSM) architecture that pairs efficiency with strong performance, making them well suited to low-latency edge environments.
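The efficiency of state-space layers comes from how they process a sequence: instead of looking back at every earlier token, they carry a fixed-size state forward and update it once per token. The following is a minimal toy sketch of a single-channel linear SSM recurrence, assuming fixed scalar parameters `a`, `b`, and `c` (hypothetical names). It is a simplified illustration of the general idea, not IBM's implementation; Mamba-2 layers make these parameters input-dependent and operate on many channels in parallel.

```python
# Toy, single-channel linear state-space recurrence (illustration only).
# Assumption: a, b, c are fixed scalars; real Mamba-2 layers make them
# input-dependent ("selective") and run over many channels at once.

from typing import List


def ssm_scan(xs: List[float], a: float, b: float, c: float) -> List[float]:
    """Compute h_t = a*h_{t-1} + b*x_t and y_t = c*h_t over a sequence."""
    h = 0.0  # the entire summary of past tokens lives in this one value
    ys: List[float] = []
    for x in xs:
        h = a * h + b * x  # constant time and memory per token
        ys.append(c * h)   # output depends only on the current state
    return ys


if __name__ == "__main__":
    print(ssm_scan([1.0, 0.0, 0.0, 2.0], a=0.5, b=1.0, c=2.0))
    # prints [2.0, 1.0, 0.5, 4.25]
```

Because the state `h` never grows, memory per token stays constant regardless of how long the input is; the trade-off is that the past is compressed rather than stored verbatim, which is one reason hybrid designs keep some attention layers alongside the SSM layers.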

Meanwhile, the standard transformer variants, Granite-4.0-1B and 350M, offer broader compatibility with tools like llama.cpp and are intended for use cases where hybrid architectures are not yet supported.

In practice, the transformer 1B model is closer to 2B parameters but matches its hybrid sibling in performance, giving developers flexibility based on their runtime constraints.

“The hybrid variant is a true 1B model. However, the non-hybrid variant is closer to 2B, but we chose to keep the naming aligned with the hybrid variant to make the connection easily visible,” explains Emma, Product Marketing Lead for Granite, during a Reddit “Ask Me Anything” (AMA) session on r/LocalLLaMA.

A competitive class of small models

IBM is entering a crowded and rapidly evolving small language model (SLM) market, competing with offerings like Qwen3, Google’s Gemma, LiquidAI’s LFM2, and even Mistral’s compact models in the sub-2B parameter space.

While OpenAI and Anthropic focus on models that require clusters of GPUs and advanced inference optimization, IBM’s Nano family is aimed squarely at developers who want to run high-performance LLMs on local or limited hardware.

In benchmark testing, IBM’s new models consistently top the charts in their class. According to data shared on X by David Cox, VP of AI Models at IBM Research:

- On IFEval (instruction following), Granite-4.0-H-1B scored 78.5, outperforming Qwen3-1.7B (73.1) and other 1–2B models.
- On BFCLv3 (function/tool calling), Granite-4.0-1B led with a score of 54.8, the highest in its size class.
- On safety benchmarks (SALAD and AttaQ), the Granite models scored over 90%, surpassing similarly sized competitors.

Overall, Granite-4.0-1B achieved a leading average benchmark score of 68.3% across general knowledge, math, code, and safety.

This performance is especially important given the hardware limitations these models are designed for.

They require less memory, run faster on CPUs or mobile devices, and don’t require cloud infrastructure or GPU acceleration to deliver useful results.

Why model size still matters, but not like it used to

In the early wave of LLMs, bigger meant better: more parameters translated into better generalization, deeper reasoning, and richer results.

But as transformer research matured, it became clear that architecture, training quality, and task-specific tuning could allow smaller models to punch well above their weight class.

IBM is counting on this evolution. By releasing open, small models that are competitive on real-world tasks, the company offers an alternative to the monolithic AI APIs that dominate today’s application stack.

In fact, the Nano models meet three increasingly important needs:

- Deployment flexibility: they run everywhere from mobile devices to microservers.
- Inference privacy: users can keep data local without having to rely on cloud APIs.
- Openness and verifiability: source code and model weights are publicly available under an open license.

Community response and roadmap signals

IBM’s Granite team didn’t just launch the models and walk away; they took to Reddit’s open-source community r/LocalLLaMA to engage with developers directly.

In an AMA-style thread, Emma (Product Marketing, Granite) answered technical questions, discussed concerns about naming conventions, and dropped hints about the future.

Notable confirmations from the thread:

- A larger Granite 4.0 model is currently in training
- Reasoning-oriented models (“thinking counterparts”) are in the pipeline
- IBM will soon release fine-tuning recipes and a full training paper
- More tooling and platform compatibility are on the roadmap

Users responded enthusiastically to the models’ capabilities, especially in instruction following and structured response tasks. One commenter summed it up:

“This is big if true for a 1B model – if the quality is good and produces consistent results. Function calling tasks, multi-language dialogs, FIM completions… this could be a real workhorse.”

Another user commented:

“The Granite Tiny is already my favorite for web searching in LM Studio – better than some Qwen models. I was tempted to give Nano a try.”

Background: IBM Granite and the Enterprise AI Race

IBM’s move into large language models began in earnest in late 2023 with the debut of the Granite foundation model family, starting with models like Granite.13b.instruct and Granite.13b.chat. These initial decoder-only models, released for use within the Watsonx platform, demonstrated IBM’s ambition to build enterprise-grade AI systems that prioritize transparency, efficiency and performance. The company open-sourced select Granite code models under the Apache 2.0 license in mid-2024, laying the foundation for broader developer adoption and experimentation.

The real turning point came with Granite 3.0 in October 2024 – a fully open-source package with general-purpose and domain-specialized models ranging from 1B to 8B parameters. These models emphasized efficiency over brute scale and offered capabilities such as longer context windows, instruction tuning, and integrated guardrails. IBM positioned Granite 3.0 as a direct competitor to Meta’s Llama, Alibaba’s Qwen and Google’s Gemma, but with a unique business-oriented lens. Later versions, including Granite 3.1 and Granite 3.2, introduced even more enterprise-friendly innovations: embedded hallucination detection, time series forecasting, document vision models, and conditional reasoning switches.

Launched in October 2025, the Granite 4.0 family represents IBM’s most technically ambitious release to date. It introduces a hybrid architecture combining transformer and Mamba-2 layers, aiming to pair the contextual precision of attention mechanisms with the memory efficiency of state-space models. This design allows IBM to significantly reduce memory and latency costs for inference, making Granite models viable on smaller hardware, while still outperforming their peers in instruction following and function calling. The launch also includes ISO 42001 certification, cryptographic model signing and distribution on platforms such as Hugging Face, Docker, LM Studio, Ollama and watsonx.ai.
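The memory savings of a hybrid design can be illustrated with a rough comparison: an attention layer caches keys and values for every token it has seen, so its cache grows linearly with context length, while an SSM layer keeps a fixed-size state. The sketch below compares the two, plus a hybrid that keeps only a few attention layers. Every dimension in it (layer counts, head sizes, state sizes) is a hypothetical placeholder chosen for illustration, not Granite's published configuration, and it ignores smaller buffers such as Mamba's convolution state.

```python
# Rough per-sequence inference memory: attention KV cache vs. SSM state.
# All dimensions used below are HYPOTHETICAL placeholders, not Granite's
# actual configuration. Small extras (e.g. conv buffers) are ignored.


def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Keys + values cached for every token in every attention layer."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


def ssm_state_bytes(n_layers: int, d_inner: int, d_state: int,
                    bytes_per_value: int = 2) -> int:
    """Recurrent state per SSM layer; independent of sequence length."""
    return n_layers * d_inner * d_state * bytes_per_value


def hybrid_bytes(seq_len: int, n_attn_layers: int, n_ssm_layers: int,
                 n_kv_heads: int, head_dim: int,
                 d_inner: int, d_state: int) -> int:
    """A hybrid stack pays KV cost only for its few attention layers."""
    return (kv_cache_bytes(seq_len, n_attn_layers, n_kv_heads, head_dim)
            + ssm_state_bytes(n_ssm_layers, d_inner, d_state))


if __name__ == "__main__":
    for seq_len in (1_000, 10_000, 100_000):
        attn = kv_cache_bytes(seq_len, n_layers=24, n_kv_heads=4, head_dim=64)
        hyb = hybrid_bytes(seq_len, n_attn_layers=4, n_ssm_layers=20,
                           n_kv_heads=4, head_dim=64, d_inner=2048, d_state=64)
        print(f"{seq_len:>7} tokens: all-attention {attn / 1e6:8.1f} MB"
              f" | hybrid {hyb / 1e6:7.1f} MB")
```

With these made-up dimensions, the all-attention cache grows from about 25 MB to about 2.5 GB as the context goes from 1,000 to 100,000 tokens, while the hybrid's fixed SSM state stays constant and only its four attention layers keep growing. The exact numbers depend entirely on the real model configuration; the point is the difference in scaling.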

Across all iterations, IBM’s focus has been clear: building reliable, efficient, and legally unambiguous AI models for business use cases. With a permissive Apache 2.0 license, public benchmarks, and an emphasis on governance, the Granite initiative not only responds to growing concerns about proprietary black-box models, but also offers a Western open alternative to the rapidly advancing models from teams like Alibaba’s Qwen. In doing so, Granite positions IBM as a leading voice in what could be the next phase of open-weight, production-ready AI.

A shift towards scalable efficiency

Ultimately, IBM’s release of the Granite 4.0 Nano models reflects a strategic shift in LLM development: from chasing record parameter counts to optimizing usability, openness, and deployment reach.

By combining competitive performance, responsible development practices and deep involvement in the open source community, IBM is positioning Granite not just as a family of models, but as a platform for building the next generation of lightweight, reliable AI systems.

For developers and researchers looking for performance without overhead, the Nano release sends a compelling message: you don’t need 70 billion parameters to build something powerful, just the right ones.