I built marshmallow castles in Google’s new AI-world generator

Google DeepMind is opening access to Project Genie, its AI tool for creating interactive game worlds from text prompts or images.

Starting Thursday, Google AI Ultra subscribers in the US can play with the experimental research prototype, which is powered by a combination of Google’s latest world model, Genie 3; its image generation model, Nano Banana Pro; and Gemini.

Five months after Genie 3’s research preview, the move is part of a broader effort to collect user feedback and training data as DeepMind races to develop more capable world models.

World models are AI systems that generate an internal representation of an environment and can be used to predict future outcomes and plan actions. Many AI leaders, including those at DeepMind, believe that world models are a crucial step in achieving artificial general intelligence (AGI). But in the shorter term, labs like DeepMind envision a go-to-market plan that starts with video games and other forms of entertainment and branches out to training embodied agents (aka robots) in simulation.

DeepMind’s release of Project Genie comes as the world model race begins to heat up. Fei-Fei Li’s World Labs released its first commercial product, Marble, late last year. Runway, the AI video generation startup, also recently launched a world model. And former Meta chief scientist Yann LeCun’s startup AMI Labs will also focus on developing world models.

“I think it’s exciting to be in a place where more people can access it and give us feedback,” Shlomi Fruchter, research director at DeepMind, told TechCrunch via video interview, smiling from ear to ear in obvious excitement about the release of Project Genie.

DeepMind researchers TechCrunch spoke to were candid about the experimental nature of the tool. It can be inconsistent, sometimes impressively generating playable worlds, and sometimes producing baffling results that miss the mark. Here’s how it works.

You start with a “world sketch” by providing text prompts for both the environment and a main character, which you can later maneuver through the world in first- or third-person perspective. Nano Banana Pro creates an image based on the prompts that you can, in theory, modify before Genie uses it as the starting point for an interactive world. The modifications worked most of the time, but the model would occasionally stumble and give you purple hair when you asked for green.

You can also use real-life photos as a basis for the model to build a world on, which, again, was hit or miss. (More on that later.)

Once you’re happy with the image, Project Genie takes a few seconds to create an explorable world. You can also remix existing worlds into new interpretations by building on their prompts, or explore curated worlds in the gallery or via the randomizer tool for inspiration. You can then download videos of the world you just explored.

DeepMind currently offers only 60 seconds of world generation and navigation, partly due to budget and compute constraints. Because Genie 3 is an autoregressive model, it requires a lot of dedicated compute, which puts a tight ceiling on how much DeepMind can offer to users.

“The reason we limited it to 60 seconds is because we wanted to bring it to more users,” Fruchter said. “When you use it, there is a chip somewhere that belongs only to you and is used specifically for your session.”

He added that extending sessions beyond 60 seconds would add little incremental value to the testing.

“The environments are interesting, but at some point the dynamics of the environment are somewhat limited due to their level of interaction. Still, we see that as a limitation that we hope to improve.”

Whimsy works, realism doesn’t

When I used the model, safety guardrails were already in place. I couldn’t generate anything resembling nudity, nor could I generate worlds that even remotely sniffed of Disney or other copyrighted material. (In December, Disney hit Google with a cease-and-desist letter, accusing the company’s AI models of copyright infringement by, among other things, training on Disney’s characters and IP and generating unauthorized content.) I couldn’t even get Genie to generate worlds of mermaids exploring underwater fantasy lands or ice queens in their wintry castles.

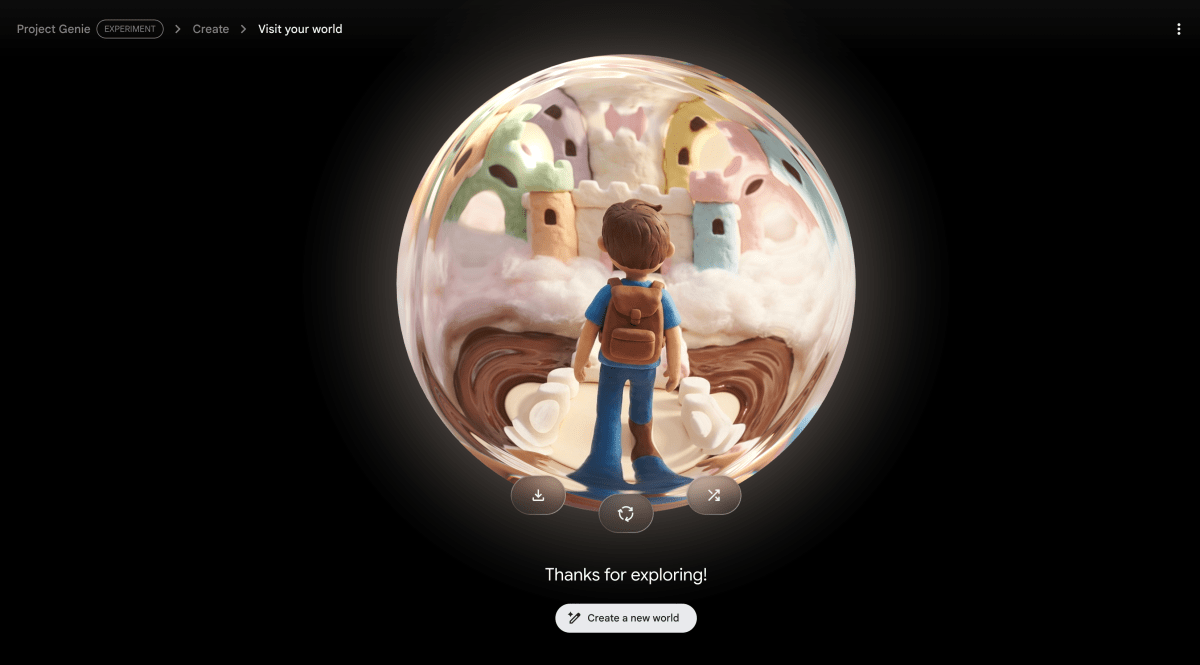

Still, the demo was very impressive. The first world I built was an attempt to realize a little childhood fantasy, in which I could explore a castle in the clouds made of marshmallows, with a chocolate sauce river and trees made of candy. (Yes, I was a chubby kid.) I asked the model to do it in claymation style, and it produced a whimsical world that I would have eaten up as a kid; the castle’s pastel-and-white spires and turrets looked puffy and tasty enough to tear off a piece and dunk it in the chocolate moat. (Video above.)

That said, Project Genie still has some kinks that need to be worked out.

The model excelled at creating worlds based on artistic prompts, such as watercolor, anime or classic cartoon aesthetics. But it tended to fall short with photorealistic or cinematic worlds, which often came out looking like a video game rather than real people in a real setting.

It also didn’t always respond well when given real photos to work with. When I gave it a photo of my office and asked it to create a world based on the photo, exactly as it was, I got a world with some of the same furnishings as my office (a wooden desk, plants, a gray sofa) arranged differently. And it looked sterile and digital, not lifelike.

When I gave it a photo of my desk with a stuffed animal on it, Project Genie animated the toy as it navigated the space, even occasionally making other objects react as it moved past them.

That interactivity is something DeepMind is working to improve. There were several occasions where my characters walked straight through walls or other solid objects.

When DeepMind initially released Genie 3, researchers highlighted how the model’s autoregressive architecture allowed it to remember what it had generated. So I wanted to test that by returning to parts of the environment it had already generated to see if they would be the same. For the most part, the model succeeded. In one case I generated a cat exploring yet another desk, and only once, when I turned back to the right side of the desk, did the model generate a second mug.

The part I found most frustrating was the way you navigate the space: the arrow keys to look around, the space bar to jump or ascend, and the WASD keys to move. I’m not a gamer, so this didn’t come naturally to me, but the keys were also often unresponsive or sent you in the wrong direction. Trying to walk from one side of the room to a doorway on the other often became a chaotic zigzag exercise, like trying to steer a shopping cart with a broken wheel.

Fruchter assured me that his team was aware of these shortcomings, and reminded me again that Project Genie is an experimental prototype. In the future, the team hopes to increase realism and improve interaction capabilities, including giving users more control over actions and environments.

“We don’t think about [Project Genie] as an end-to-end product that people can go back to every day, but we think there’s already a glimpse of something that’s interesting and unique and can’t be done any other way,” he said.