Google’s SIMA 2 agent uses Gemini to reason and act in virtual worlds

Google DeepMind on Thursday shared a research preview of SIMA 2, the next generation of its generalist AI agent. SIMA 2 integrates the language and reasoning powers of Gemini, Google's large language model, to go beyond simply following instructions to understanding and interacting with its environment.

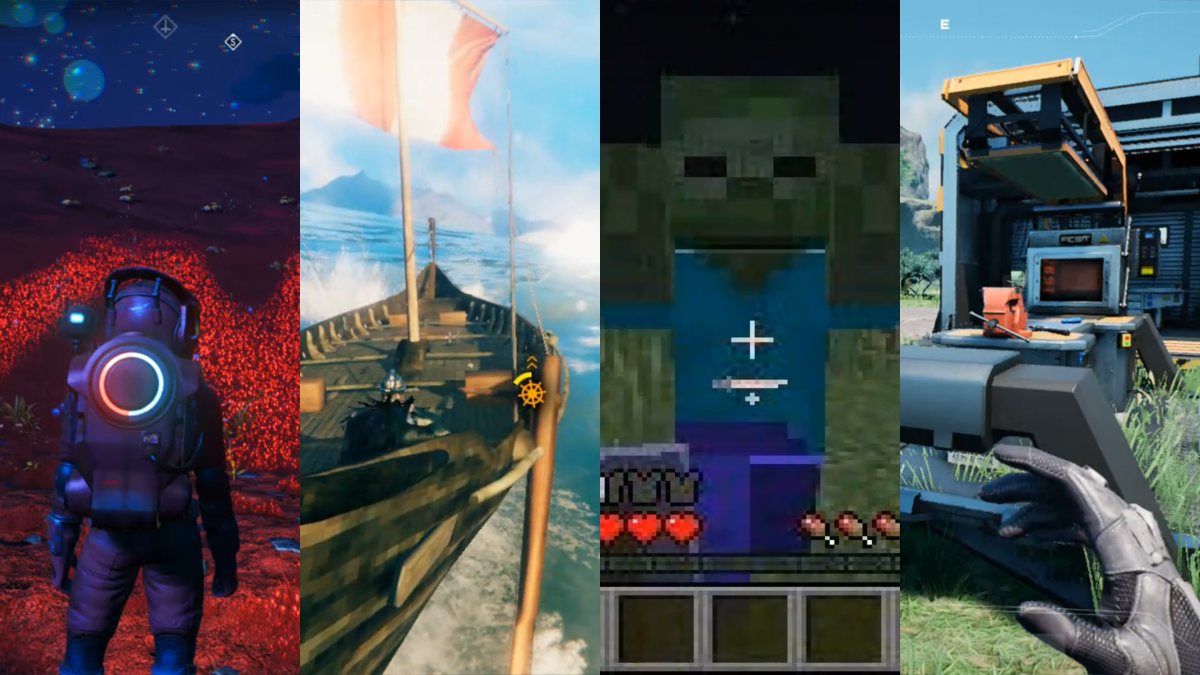

Like many of DeepMind's projects, including AlphaFold, the first version of SIMA was trained on hundreds of hours of video game data to learn how to play multiple 3D games like a human, even some games it wasn't trained on. SIMA 1, unveiled in March 2024, could follow basic instructions across a wide range of virtual environments, but it achieved only a 31% success rate on complex tasks, compared with 71% for humans.

“SIMA 2 is a step change and improvement in capabilities over SIMA 1,” Joe Marino, senior research scientist at DeepMind, said at a press conference. “It’s a more general agent. It can perform complex tasks in previously unseen environments. And it’s a self-improving agent. So it can actually improve itself based on its own experience, which is a step toward more general robots and AGI systems in general.”

SIMA 2 is powered by the Gemini 2.5 Flash-Lite model. AGI refers to artificial general intelligence, which DeepMind defines as a system capable of a wide range of intellectual tasks, with the ability to learn new skills and generalize knowledge across different areas.

Working with so-called "embodied agents" is crucial for general intelligence, DeepMind researchers say. Marino explained that an embodied agent interacts with a physical or virtual world through a body, observing input and taking actions as a robot or human would, whereas a disembodied agent might interact with your calendar, take notes or run code.

Jane Wang, a senior staff researcher at DeepMind with a background in neuroscience, told TechCrunch that SIMA 2 goes well beyond gameplay.

“We’re asking it to actually understand what’s happening, understand what the user is asking it to do, and then be able to respond in a common-sense way, which is actually quite difficult,” Wang said.


By integrating Gemini, SIMA 2 doubled the performance of its predecessor, uniting Gemini’s advanced language and reasoning abilities with the embodied skills developed through training.

Marino demonstrated SIMA 2 in "No Man's Sky," where the agent described its environment (a rocky planetary surface) and worked out its next steps by recognizing and interacting with a distress beacon. SIMA 2 also uses Gemini for internal reasoning. In another game, when asked to walk to the house that is the color of a ripe tomato, the agent showed its reasoning: ripe tomatoes are red, so it should go to the red house. It then found the house and approached it.

Because it is powered by Gemini, SIMA 2 can also follow instructions given as emojis: "You instruct it 🪓🌲, and it will cut down a tree," Marino said.

Marino also demonstrated how SIMA 2 can navigate newly generated photorealistic worlds produced by Genie, DeepMind’s world model, properly identifying and interacting with objects such as benches, trees and butterflies.

Gemini also enables self-improvement without much human data, Marino added. Where SIMA 1 was trained entirely on human gameplay, SIMA 2 uses it as a foundation to provide a strong initial model. When the team places the agent in a new environment, it asks a different Gemini model to create new tasks and a separate reward model to score the agent’s attempts. Using these self-generated experiences as training data, the agent learns from its own mistakes and gradually performs better, essentially teaching itself new behaviors through trial and error as a human would, guided by AI-based feedback rather than humans.
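For readers curious how such a loop fits together, here is a toy sketch of the cycle Marino describes: a task generator proposes tasks, the agent attempts them, a separate reward model scores each attempt, and the scored experiences become training data. Everything here is an illustrative assumption, not DeepMind's implementation: `propose_task` and `score_attempt` are hypothetical stand-ins for the Gemini task-setter and the reward model, and the simple reward-weighted probability update replaces whatever training procedure SIMA 2 actually uses.

```python
import random
from dataclasses import dataclass, field

# Hypothetical task list; in SIMA 2 a Gemini model invents tasks for new environments.
TASKS = ["chop down a tree", "walk to the red house", "answer the distress beacon"]


def propose_task(rng: random.Random) -> str:
    """Hypothetical stand-in for the Gemini model that proposes new tasks."""
    return rng.choice(TASKS)


def score_attempt(task: str, succeeded: bool) -> float:
    """Hypothetical stand-in for the separate reward model: 1.0 on success, else 0.0."""
    return 1.0 if succeeded else 0.0


@dataclass
class ToyAgent:
    # Per-task success probability. Unseen tasks start at a low base rate,
    # loosely standing in for the initial model trained on human gameplay.
    base_rate: float = 0.1
    skill: dict = field(default_factory=dict)

    def attempt(self, task: str, rng: random.Random) -> bool:
        return rng.random() < self.skill.get(task, self.base_rate)

    def train_on(self, experiences, lr: float = 0.2) -> None:
        # Assumed reward-weighted update: each scored experience nudges that
        # task's success probability toward 1.0 in proportion to its reward.
        for task, reward in experiences:
            p = self.skill.get(task, self.base_rate)
            self.skill[task] = p + lr * reward * (1.0 - p)


def self_improve(agent: ToyAgent, rounds: int = 10,
                 episodes_per_round: int = 50, seed: int = 0) -> ToyAgent:
    rng = random.Random(seed)
    for _ in range(rounds):
        experiences = []
        for _ in range(episodes_per_round):
            task = propose_task(rng)
            succeeded = agent.attempt(task, rng)
            experiences.append((task, score_attempt(task, succeeded)))
        # Train only on self-generated, AI-scored data: no human labels.
        agent.train_on(experiences)
    return agent


if __name__ == "__main__":
    agent = self_improve(ToyAgent())
    for task in TASKS:
        print(f"{task}: {agent.skill.get(task, agent.base_rate):.2f}")
```

The point of the sketch is the division of labor: the agent never sees a human-written label, and the only learning signal comes from the model-generated tasks and the reward model's scores.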

DeepMind sees SIMA 2 as a step toward unlocking more general-purpose robots.

“If we think about what a system needs to do to perform tasks in the real world, like a robot, I think there are two components to it,” Frederic Besse, senior research engineer at DeepMind, said at a press conference. “First, there is a high level of understanding of the real world and what needs to be done, as well as some reasoning.”

If you ask a humanoid robot in your house to check how many cans of beans are in the cupboard, the system has to understand all the concepts involved (what beans are, what a cupboard is) and navigate to that location. Besse said SIMA 2 is focused more on those high-level behaviors than on lower-level actions, such as controlling physical joints and wheels.

The team declined to share a specific timeline for deploying SIMA 2 in physical robotic systems. Besse told TechCrunch that DeepMind's recently revealed robotics foundation models, which can also reason about the physical world and create multi-step plans to complete a mission, were trained differently and separately from SIMA.

While there is also no timeline for releasing more than a preview of SIMA 2, Wang told TechCrunch that the goal is to show the world what DeepMind has been working on and see what types of collaborations and potential applications are possible.