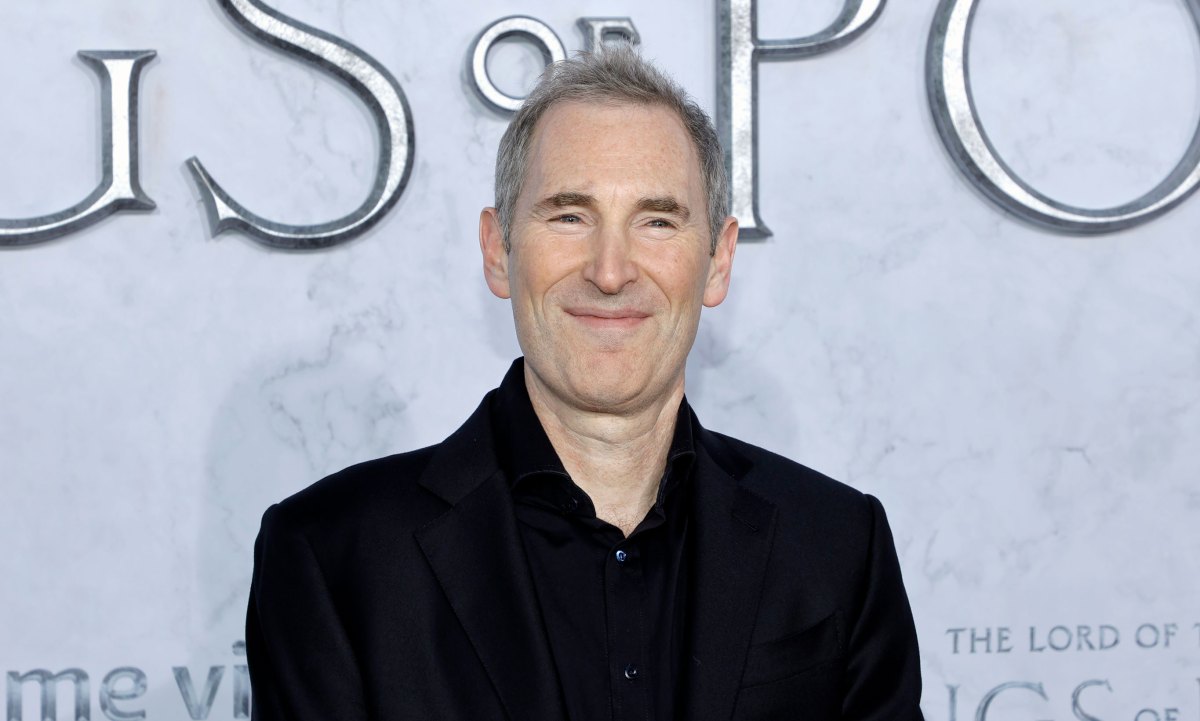

Andy Jassy says Amazon’s Nvidia competitor chip is already a multibillion-dollar business

Can any company, big or small, really topple Nvidia’s AI chip dominance? Maybe not. But there are hundreds of billions of dollars in revenue for those who can pocket even a portion of it for themselves, Amazon CEO Andy Jassy said this week.

As expected, at the AWS re:Invent conference, the company unveiled the next generation of its Nvidia-rival AI chip, Trainium3, which is 4x faster yet uses less power than the current Trainium2. In a post on X, Jassy shared a few details about the current Trainium that show why the company is so optimistic about the chip.

He said the Trainium2 business has “substantial traction, is a multi-billion dollar run-rate business, has more than 1 million chips in production and more than 100,000 companies use it today for the majority of Bedrock.”

Bedrock is Amazon’s AI app development tool that allows businesses to choose from many AI models.

Jassy said Amazon’s AI chip is winning among the company’s vast base of cloud customers because it has “price-performance advantages over other GPU options that are attractive.” In other words, he believes it works better and costs less than those “other GPUs” on the market.

That is, of course, classic Amazon MO: offering its own homegrown technology at lower prices.

Additionally, AWS CEO Matt Garman offered even more insight in an interview with CRN about one customer responsible for a large chunk of those billions in revenue: no shock here, it’s Anthropic.

“We’ve seen tremendous traction from Trainium2, especially from our partners at Anthropic, who we announced Project Rainier, where more than 500,000 Trainium2 chips will help them build the next generations of models for Claude,” Garman said.

Project Rainier is Amazon’s most ambitious AI server cluster, spanning multiple data centers across the US and built to meet Anthropic’s skyrocketing needs. It came online in October. Amazon is, of course, a major investor in Anthropic. In exchange, Anthropic has made AWS its primary model training partner, even though Anthropic is now also offered on Microsoft’s cloud via Nvidia chips.

In addition to Microsoft’s cloud, OpenAI now also uses AWS. But the OpenAI partnership couldn’t have added much to Trainium’s revenue, because AWS is running it on Nvidia chips and systems, the cloud giant said.

Only a few American companies, such as Google, Microsoft, Amazon, and Meta, have all the technical components (expertise in silicon chip design, homegrown high-speed interconnects, and networking technology) to even attempt real competition with Nvidia. (Remember, Nvidia cornered the market on an important, powerful networking technology in 2019 when CEO Jensen Huang outbid Intel and Microsoft to buy InfiniBand hardware maker Mellanox.)

Additionally, AI models and software built to run on Nvidia’s chips also rely on Nvidia’s own Compute Unified Device Architecture (CUDA) software. CUDA allows the apps to use the GPUs for parallel processing, among other things. Much like yesterday’s Intel versus SPARC chip wars, it is no small matter to rewrite an AI app for a non-CUDA chip.

Yet Amazon may have a plan for that. As we previously reported, the next generation of its AI chip, Trainium4, will be built to work with Nvidia’s GPUs in the same system. Whether that helps lure more companies away from Nvidia, or simply reinforces Nvidia’s dominance on AWS’s cloud, remains to be seen.

It may not matter to Amazon. If it’s already on track to make billions of dollars from the Trainium2 chip, and the next generation will be that much better, that may be winning enough.
