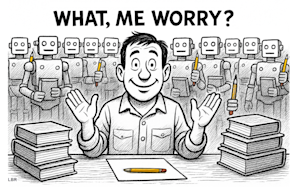

AI denial is becoming an enterprise risk: Why dismissing “slop” obscures real capability gains

ChatGPT was born three years ago. It stunned the world and ignited unprecedented investment and enthusiasm in the field of AI. Today, ChatGPT is still a toddler, but public sentiment around the AI boom has turned sharply negative. The shift began when OpenAI released GPT-5 this summer to mixed reviews, mostly from casual users who, unsurprisingly, judged the system on its superficial flaws rather than its underlying capabilities.

Since then, experts and influencers have declared that progress in AI is slowing, that scaling has “hit the wall,” and that the entire field is just another technology bubble inflated by noisy hype. In fact, many influencers have latched onto the dismissive phrase “AI slop” to diminish the amazing images, documents, videos, and code that advanced AI models generate on command.

This perspective is not only wrong, it is dangerous.

I wonder: where were all these “experts” on irrational technology bubbles when electric scooter startups were being touted as a transportation revolution and cartoon NFTs were being auctioned off for millions? They were probably too busy buying worthless land in the metaverse or adding to their positions in GameStop. But when it comes to the AI boom, which is undoubtedly the most significant technological and economic transformation of the past 25 years, journalists and influencers can’t write the word “slop” often enough.

Aren’t we protesting too much? After all, AI is, by any objective measure, far more capable than the vast majority of computer scientists predicted only five years ago, and it’s still improving at a surprising rate. The impressive leap shown by Gemini 3 is just the latest example. At the same time, McKinsey recently reported that 20% of organizations are already getting tangible value from genAI. And recent research from Deloitte indicates that 85% of organizations increased their AI investments in 2025, and 91% plan to increase them again in 2026.

This does not fit the “bubble” narrative or the dismissive “slop” language. As a computer scientist and research engineer who began working with neural networks in 1989 and has followed their progress through cold winters and hot peaks ever since, I am amazed almost every day by the rapidly increasing capabilities of cutting-edge AI models. When I talk to other professionals in the field, I hear similar sentiments. If anything, the pace of progress in AI leaves many experts feeling overwhelmed and, frankly, somewhat scared.

The Dangers of AI Denial

So why is the public buying into the narrative that AI is faltering, that its output is “slop,” and that the AI industry lacks authentic use cases? Personally, I believe it’s because we have entered a collective state of AI denial, clinging to the stories we want to hear despite strong evidence to the contrary. Denial is the first stage of grief and thus a reasonable response to the deeply unsettling prospect that we humans may soon lose cognitive supremacy here on planet Earth. In other words, the overblown AI bubble narrative is a social defense mechanism.

Believe me, I get it. I have warned about the destabilizing risks and demoralizing impact of superintelligence for over ten years, and I too think that AI is becoming too smart too quickly. The fact is, we are rapidly moving toward a future where widely available AI systems will be able to outperform most humans on most cognitive tasks, solving problems faster, more accurately, and yes, more creatively than any individual can. I emphasize “creativity” because AI deniers often insist that certain human qualities (particularly creativity and emotional intelligence) will always remain beyond the reach of AI systems. Unfortunately, there is little evidence to support this view.

In terms of creativity, today’s AI models can generate content faster and with more variety than any individual human. Critics argue that true creativity requires inner motivation. I understand that argument, but I find it circular: we define creativity by how we experience it, rather than by the quality, originality, or usefulness of the output. Furthermore, we simply don’t know whether AI systems will develop internal drives or a sense of agency. Regardless, if AI can produce original work that rivals most human professionals, the impact on creative jobs will still be devastating.

The AI Manipulation Problem

Our human lead in emotional intelligence is even more precarious. AI will likely soon be able to read our emotions faster and more accurately than any human, tracking subtle signals in our micro-expressions, vocal patterns, posture, gaze, and even breathing. And as we integrate AI assistants into our phones, glasses, and other wearable devices, these systems will monitor our emotional responses throughout the day and build predictive models of our behavior. Without strict regulation, which is increasingly unlikely, these predictive models could be used to target each of us with individually optimized influence designed to maximize persuasiveness.

This is known as the AI manipulation problem, and it suggests that emotional intelligence may not be an advantage for humanity. In fact, it could be a significant weakness, creating an asymmetric dynamic in which AI systems can read us with superhuman accuracy while we cannot read AI at all. When you talk to photorealistic AI agents (and you will), you will see a smiling facade designed to appear warm, empathetic, and trustworthy. It will look and feel human, but that’s just an illusion, and it could easily sway your views. After all, our emotional responses to faces are visceral reflexes shaped by millions of years of evolution on a planet where every interactive human face we encountered was actually human. Soon that will no longer be true.

We are quickly moving toward a world where many of the faces we encounter will belong to AI agents hiding behind digital facades. In fact, these “virtual spokespersons” could easily be given appearances designed for each of us based on our previous reactions, making it even easier for us to let our guard down. And yet many insist that AI is just another technology cycle.

This is wishful thinking. The massive investments in AI are not driven by hype; they are driven by the expectation that AI will permeate every aspect of daily life, embodied as intelligent agents that we interact with all day long. These systems will help us, teach us, and influence us. They will reshape our lives, and it will happen faster than most people think.

To be clear, we are not witnessing an AI bubble filling with hot air. We are watching a new planet emerge, a molten world that is quickly taking shape and will harden into a new AI-powered society. Denial will not stop this. It will only leave us less prepared for the risks.

Louis Rosenberg is an early pioneer in the field of augmented reality and a long-time AI researcher.