Why Agentic AI Requires More Than Better Models

Agentic artificial intelligence (AI) will fundamentally reshape the structure of corporate work and commerce. Rather than simply responding to instructions, these agents actively participate in workflows by planning tasks, creating and using tools, correcting their own errors, and autonomously pursuing multi-step goals. The result is faster, more adaptive workflows. The emergence of the Model Context Protocol (MCP) and the Agent-to-Agent (A2A) protocol represents a significant technical advance, analogous to what Hypertext Transfer Protocol (HTTP) and Representational State Transfer (REST) did for Web services, by providing shared mechanisms for interaction, context exchange, and orchestration. Tool integrations that once required months of work can now be completed automatically.

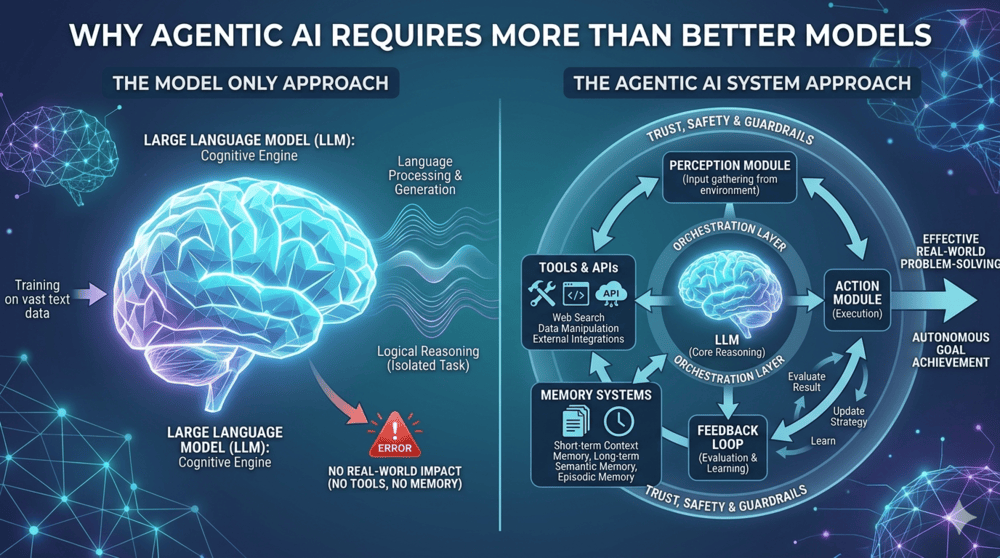

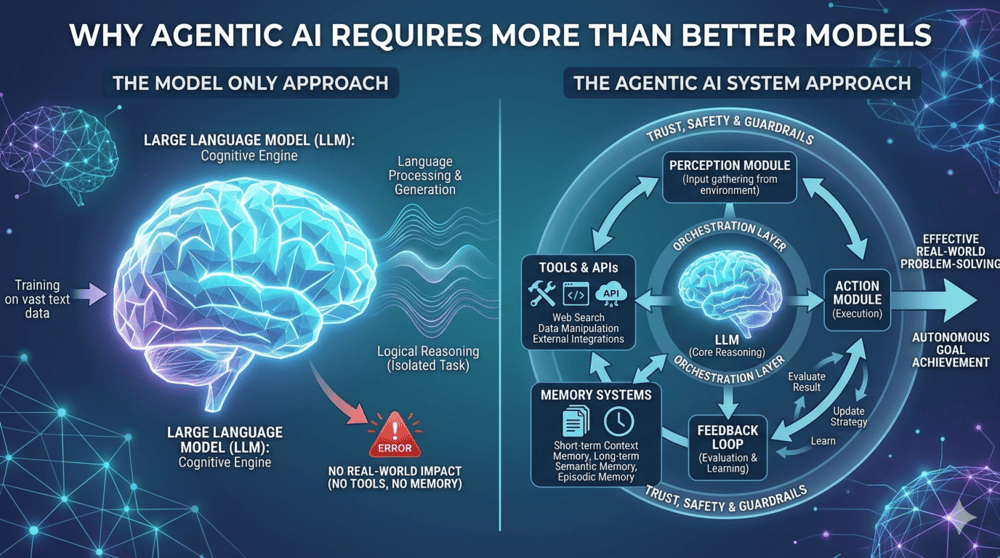

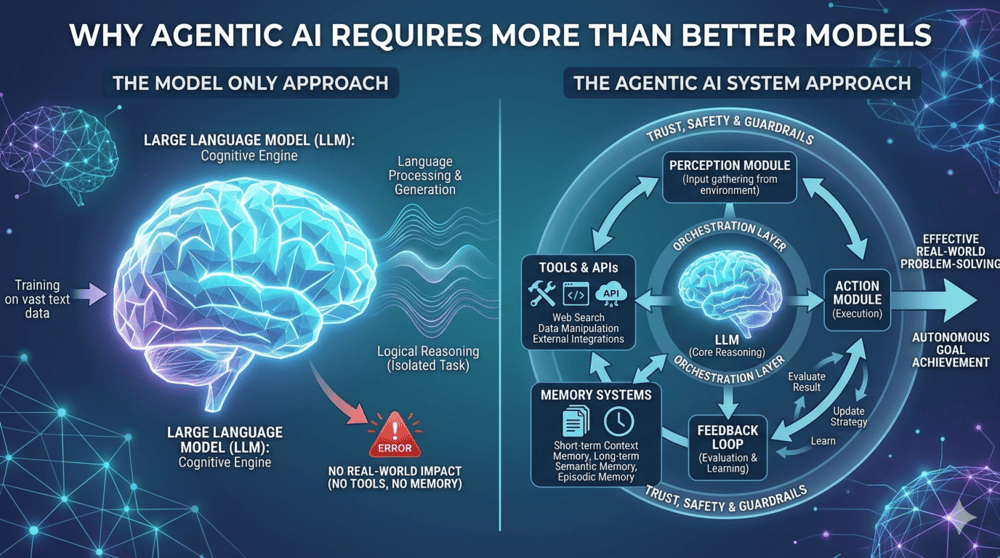

However, without proper organizational constraints, this connectivity introduces a new class of risk. Real-world implementation experience in regulated environments shows that agentic systems can lose their coherent context mid-workflow, confidently produce incorrect results under ambiguous conditions, and fail in ways that are more difficult to detect than traditional software errors. This distributed systems problem will not be solved by smarter AI models, but rather by combining orchestration infrastructure and governance frameworks. Process redesign, not automation, is the path to production-ready, reliable agentic AI systems.

Trajectory of the AI era

OpenAI’s launch of ChatGPT in 2022 marked the beginning of the large language model (LLM) era for large organizations. At the time, most deployed agents were stateless, single-turn systems designed to perform limited tasks. In 2024, Anthropic released MCP as an open standard for connecting AI systems with data systems. Google followed in 2025 with the A2A protocol, which allows agents to coordinate tasks and share information across multiple platforms. Together, these protocols form complementary layers in the technology stack, accelerating the introduction of agentic AI into enterprise systems.

In 2026, the transition from LLMs to agentic AI represents both a technological advancement and a paradigm shift in business workflows. Models have evolved from passive responders to active participants in business processes. Teams of AI agents can access and collaborate across multiple business systems.

Using real-time data such as web searches and Internet of Things (IoT) sensor feeds, agents analyze dynamic data, generate insights, and trigger immediate actions. For example, Walmart has deployed an autonomous inventory agent that detects demand signals and automatically initiates inventory actions. The results include a 22% increase in e-commerce sales in pilot regions and a significant reduction in out-of-stock incidents.

Another feature that distinguishes agentic AI from previous LLMs is the shift from instruction-based to intention-based computing. Developers can now focus on the “what” instead of the “how” by assigning agents tasks and letting them design new workflows that achieve business objectives. Tools like OpenClaw allow users to give agents broad autonomy, alert them to real-world problems, and observe how they identify solutions.

According to McKinsey, 62% of organizations are experimenting with AI agents but have not yet deployed them at scale. This gap indicates that the race to adopt agentic AI is still open, which is rare for a technology transition that commands this level of market attention.

Scale depends on orchestration

Companies will close this gap in production implementation by designing new orchestration infrastructures. A key challenge in creating these infrastructures is updating state management processes to handle non-deterministic results. Adopting A2A and MCP is an essential starting point in this process. These protocols enable the transition from stateless agents, which produce discrete outputs without retaining transaction history, to stateful agents, which maintain the memory of past tasks and track the status of running processes.
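The stateless/stateful distinction above can be illustrated with a minimal Python sketch. It is not an MCP or A2A implementation; the names `stateless_agent` and `StatefulAgent` are hypothetical, and the "work" is a placeholder string. It only shows what it means for an agent to retain task history and track the status of running processes.

```python
from dataclasses import dataclass, field


def stateless_agent(task: str) -> str:
    """Produce one discrete output; nothing is remembered afterwards."""
    return f"done: {task}"


@dataclass
class StatefulAgent:
    """Hypothetical agent that keeps task history and per-task status."""

    history: list[str] = field(default_factory=list)
    status: dict[str, str] = field(default_factory=dict)

    def start(self, task: str) -> None:
        # Record that the task is in flight so an orchestrator can inspect it.
        self.status[task] = "running"

    def finish(self, task: str) -> str:
        # Mark completion and append to the memory of past tasks.
        self.status[task] = "completed"
        self.history.append(task)
        earlier = len(self.history) - 1
        return f"done: {task} (after {earlier} earlier tasks)"


agent = StatefulAgent()
agent.start("reconcile invoices")
first = agent.finish("reconcile invoices")
agent.start("flag duplicates")
second = agent.finish("flag duplicates")
```

In a real deployment, the history and status would presumably live in durable storage owned by the orchestration layer rather than in process memory, so that a restarted agent can resume a workflow.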

While stateful AI agents offer exciting new capabilities, they require orchestration environments designed with their strengths and limitations in mind. Tomorrow’s industry leaders are asking, “If an agent were to handle this workflow, how would we redesign the process from scratch?” Anticipating how agents might fail and planning accordingly are critical to this process redesign. The mindset shift from capability-first to failure-mode-first is a clear characteristic that separates mature agentic implementations from those that cause problems on a large scale.

Scaling agentic AI systems is a challenge. That’s why it’s critical that organizations start small and learn from quantifiable test cases before tackling more ambitious projects. Clear inputs, clear transformations, and verifiable outputs are at the heart of a scalable job architecture. For example, in software engineering, Amazon coordinated agents to modernize thousands of legacy Java applications through Amazon Q Developer, completing upgrades in a fraction of the expected time. This was only possible because Amazon used test suites and structured data sets that enabled software validation. Each task either passes or fails, allowing agents to evaluate and repeat their work without human intervention.

The financial services company Ramp launched an AI finance agent in July 2025 that reads company policy documents, independently monitors expenses, identifies violations, generates refund approvals, and verifies supplier compliance. These important governance tasks are based on verifiable data against which agents can be evaluated, making them accountable and transparent.

Governance frameworks enable speed and trust

MCP and A2A accelerate the adoption of agentic AI in complex, distributed workflows, but without strong oversight, these tools can introduce risks, including unpredictable behavior and security vulnerabilities. In less regulated industries, organizations once struggled to justify the upfront costs of data management and governance initiatives. Yet such frameworks are exactly what companies need to mitigate risk and scale agentic AI.
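One way to picture oversight at the tool-calling layer is a gate that checks every call against an allowlist and records it for audit. The sketch below is a simplified assumption, not part of MCP, A2A, or any vendor framework; `ToolGate`, the agent name, and the tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolGate:
    """Hypothetical governance layer placed in front of agent tool calls."""

    allowed: dict[str, set[str]]
    audit_log: list[tuple[str, str, bool]] = field(default_factory=list)

    def call(self, agent: str, tool: str, action: Callable[[], Any]) -> Any:
        permitted = tool in self.allowed.get(agent, set())
        # Log every attempt, permitted or not, so reviewers can trace behavior.
        self.audit_log.append((agent, tool, permitted))
        if not permitted:
            raise PermissionError(f"{agent} may not call {tool}")
        return action()


gate = ToolGate(allowed={"expense-agent": {"read_policy", "flag_violation"}})
policy = gate.call("expense-agent", "read_policy", lambda: "policy text")

blocked = False
try:
    gate.call("expense-agent", "issue_payment", lambda: "paid")
except PermissionError:
    blocked = True
```

Because the gate logs denied calls as well as permitted ones, unexpected agent behavior leaves a trace instead of failing silently.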

The governance-as-multiplier thesis suggests that strong data management, in addition to improving transparency and security, also increases the speed at which companies can deploy, scale, and benefit from agentic AI. According to a 2026 Databricks report, companies that have established AI governance frameworks have released twelve times as many AI projects as competitors without such policies.

Highly regulated industries use AI agents to reduce compliance costs and improve reporting efficiency. In telecommunications, for example, agents detect network anomalies, open service tickets, and alert customers in one integrated sequence. Monitoring and reporting on service level agreements (SLAs), which previously took a human operator 20 to 40 minutes, is now accomplished in less than two minutes. As these tangible benefits accrue, it becomes clear that disciplined governance is not a barrier to AI adoption, but the foundation that enables its speed, reliability, and scale.

The future of agentic AI depends on infrastructure

AI technology is approaching a new stage of maturity as organizations move from single-turn chatbots to multi-agent orchestration. Shared protocols accelerate this transition through powerful interoperability and new programming paradigms, laying the foundation for complex workflows in distributed systems.

The technical capabilities of agentic AI are developing faster than the underlying governance architectures. While agentic AI tools are powerful, they still lack transparency and accountability. To close this gap, industry leaders are investing in new layers of orchestration and governance that enable agents to collaborate reliably across enterprise systems. There is no simple path to safe, scalable agentic AI. The companies that get the most value from agents are the ones that invest in infrastructure now rather than pursuing isolated, highly visible demonstrations.

About the author: Santoshkalyan (Tosh) Rayadhurgam is head of advanced AI at a financial services platform. Previously at Meta, he led foundational AI efforts, specializing in building production-level AI models, AI agents and systems. He has over 12 years of experience at Stripe, Meta, Lyft and Amazon Lab126. Rayadhurgam holds a master’s degree from Cornell University and a bachelor’s degree from the National Institute of Technology in India. Connect with him on LinkedIn.