Nvidia wants to be the Android of generalist robotics

Nvidia released a new stack of robot foundation models, simulation tools and edge hardware at CES 2026, moves that signal the company’s ambition to become the standard platform for generalist robotics, just as Android became the operating system for smartphones.

Nvidia’s move into robotics reflects a broader shift in the industry as AI moves from the cloud to machines that can learn to think in the physical world, enabled by cheaper sensors, advanced simulation and AI models that can increasingly generalize across tasks.

Nvidia on Monday unveiled details about its full-stack ecosystem for physical AI, including new open foundation models that allow robots to reason, plan and adapt across many tasks and diverse environments, moving beyond narrow task-specific bots. All of the models are available on Hugging Face.

These models include: Cosmos Transfer 2.5 and Cosmos Predict 2.5, two world models for synthetic data generation and robot policy evaluation in simulation; Cosmos Reason 2, a reasoning vision language model (VLM) that allows AI systems to see, understand, and act on the physical world; and Isaac GR00T N1.6, the next-gen vision language action model (VLA) built specifically for humanoid robots. GR00T relies on Cosmos Reason as its brain, unlocking full-body control for humanoids, allowing them to move and handle objects simultaneously.

Nvidia also introduced Isaac Lab-Arena at CES, an open source simulation framework hosted on GitHub that serves as another component of the company’s physical AI platform, enabling safe virtual testing of robot capabilities.

The platform promises to address a critical challenge for the industry: as robots learn increasingly complex tasks, from precise object handling to cable installation, validating these skills in physical environments can be costly, slow and risky. Isaac Lab-Arena addresses this by consolidating resources, task scenarios, training tools and established benchmarks such as Libero, RoboCasa and RoboTwin, creating a unified standard where the industry previously had none.

The ecosystem is supported by Nvidia OSMO, an open source command center that serves as connective infrastructure, integrating the entire workflow from data generation to training across both desktop and cloud environments.

And to back it all up, there’s the new Blackwell-powered Jetson T4000 module, the newest member of the Thor family. Nvidia is pitching it as a cost-effective on-device compute upgrade that delivers 1,200 teraflops of AI compute and 64 gigabytes of memory while running efficiently at 40 to 70 watts.

Nvidia is also deepening its partnership with Hugging Face to let more people experiment with robot training without the need for expensive hardware or specialized knowledge. The partnership integrates Nvidia’s Isaac and GR00T technologies into Hugging Face’s LeRobot framework, connecting Nvidia’s 2 million robotics developers with Hugging Face’s 13 million AI builders. Hugging Face’s open source Reachy 2 humanoid now works directly with Nvidia’s Jetson Thor chip, allowing developers to experiment with different AI models without being tied to proprietary systems.

The bigger picture here is that Nvidia wants to make robotics development more accessible, and be the underlying hardware and software vendor that powers it, just as Android is the standard for smartphone makers.

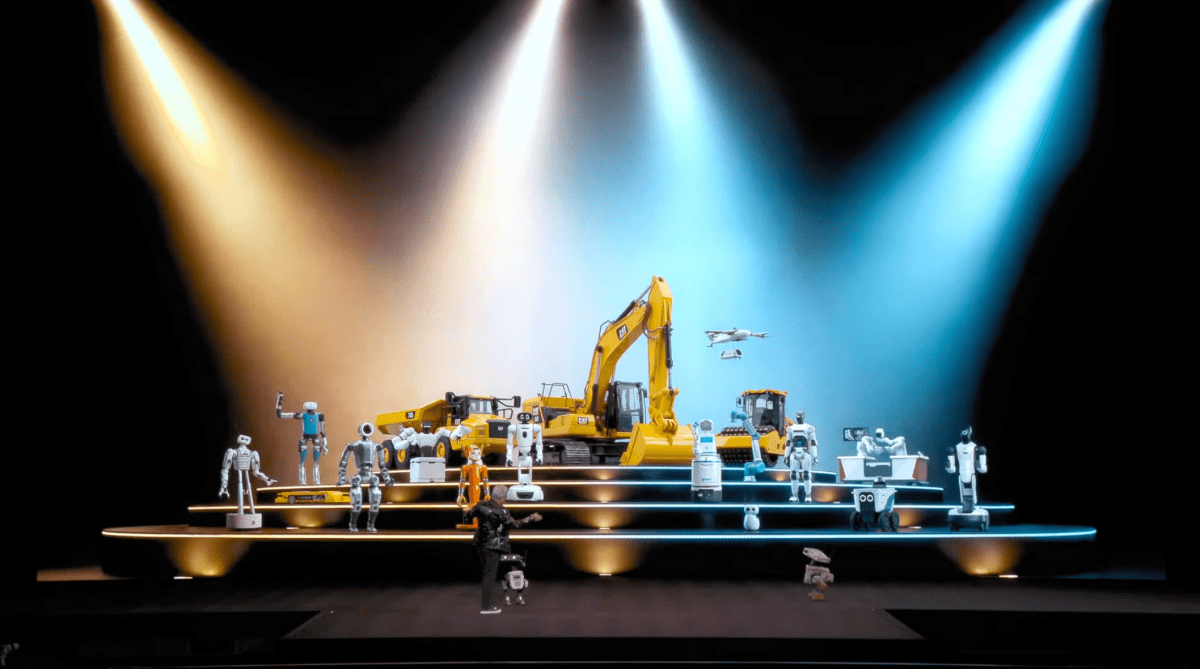

There are early signs that Nvidia’s strategy is working. Robotics is the fastest growing category on Hugging Face, with Nvidia’s models leading downloads. Meanwhile, robotics companies from Boston Dynamics and Caterpillar to Franka Robotics and NEURA Robotics are already using Nvidia’s technology.
